Ship AI Features in Days, Not Quarters.

A fully managed retrieval-augmented generation (RAG) pipeline with the APIs, large language model (LLM) flexibility and quality metrics you need to go from data to production without building the infrastructure yourself.

Key Benefits for AI/ML Engineers

- Go from data to deployed in a fraction of the time

- Build on a pipeline that’s already production-hardened

- Get full pipeline control through clean, documented APIs

Build It or Ship It?

Here’s what the engineering tradeoff actually looks like in production:

| Building RAG Yourself | Implementing the Progress® Agentic RAG Solution |
| --- | --- |
| Weeks of Assembly Before You Ship. Many weeks spent assembling vector DBs, chunking logic and re-rankers. | Full Pipeline Live on Day One. A fully managed pipeline, ready for production deployment via API on day one. |
| A Custom Parser for Every Surprise. Custom parsers for every file type you didn’t anticipate. | Every File Type, Handled Automatically. Multimodal ingestion that handles text, PDF, audio, video and images automatically. |
| Switching Models Means Rearchitecting. Tight coupling to one LLM, so switching means rearchitecting. | Swap Any Model Without Touching Pipelines. Swap between OpenAI, Anthropic, Mistral or Google models without touching the pipeline. |
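For engineers weighing the model-swapping row, here is a minimal sketch of the general idea: retrieval and prompt building stay fixed while the model is passed in as a plain function. This is a generic illustration, not the Progress Agentic RAG API. Every name in it (`Passage`, `retrieve`, `answer`, `model_a`, `model_b`) is hypothetical, the word-overlap retrieval is a toy stand-in for real vector search, and the two "models" are stubs where a provider SDK call would go.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical type: any function that maps a prompt to text can act as the model.
Generator = Callable[[str], str]


@dataclass
class Passage:
    source: str
    text: str


def retrieve(question: str, corpus: List[Passage], k: int = 2) -> List[Passage]:
    """Toy retrieval: rank passages by how many question words they share."""
    words = set(question.lower().split())
    ranked = sorted(
        corpus,
        key=lambda p: len(words & set(p.text.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def answer(question: str, corpus: List[Passage], generate: Generator) -> Dict[str, object]:
    """Retrieval and prompt building never reference a specific model."""
    passages = retrieve(question, corpus)
    context = "\n".join(f"[{p.source}] {p.text}" for p in passages)
    prompt = f"Answer using only this context:\n{context}\n\nQuestion: {question}"
    return {"answer": generate(prompt), "sources": [p.source for p in passages]}


# Stand-in models (assumptions); a real deployment would wrap a provider SDK call.
def model_a(prompt: str) -> str:
    return f"model-a saw {len(prompt)} characters"


def model_b(prompt: str) -> str:
    return f"model-b saw {len(prompt)} characters"


corpus = [
    Passage("pricing.pdf", "The trial lasts 14 days"),
    Passage("faq.html", "Audio and video files are supported"),
    Passage("notes.txt", "Unrelated meeting notes"),
]

# Swapping the model is a one-argument change; the cited sources stay the same.
first = answer("How long is the trial", corpus, model_a)
second = answer("How long is the trial", corpus, model_b)
```

Because `answer` depends only on the `Generator` signature, changing providers in this sketch touches one argument and leaves retrieval, prompting and source tracking alone.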

Key Use Cases

Accelerated RAG Deployment

Deploy a production-grade retrieval pipeline via API in days, not the months it takes to build one yourself.

LLM Orchestration & Evaluation

Run any LLM against your data with built-in REMi quality metrics, scoring relevance, groundedness and recall on every output so you can ship AI you can actually stand behind.

Production Monitoring & Observability

Every answer is cited, source-tracked and quality-scored, so you can audit, iterate on and defend outputs before and after go-live.

Key Capabilities

Everything you need to build, tune and ship production-ready AI without infrastructure overhead.

End-to-End Modular RAG Pipeline

Every component of a production RAG system in one fully tunable pipeline, no assembly required.

Semantic Chunking & Knowledge Graph

Meaning-preserving segmentation with entity recognition built in.

Model Context Protocol (MCP) Connectivity

Live retrieval from any business app. No custom connectors needed.

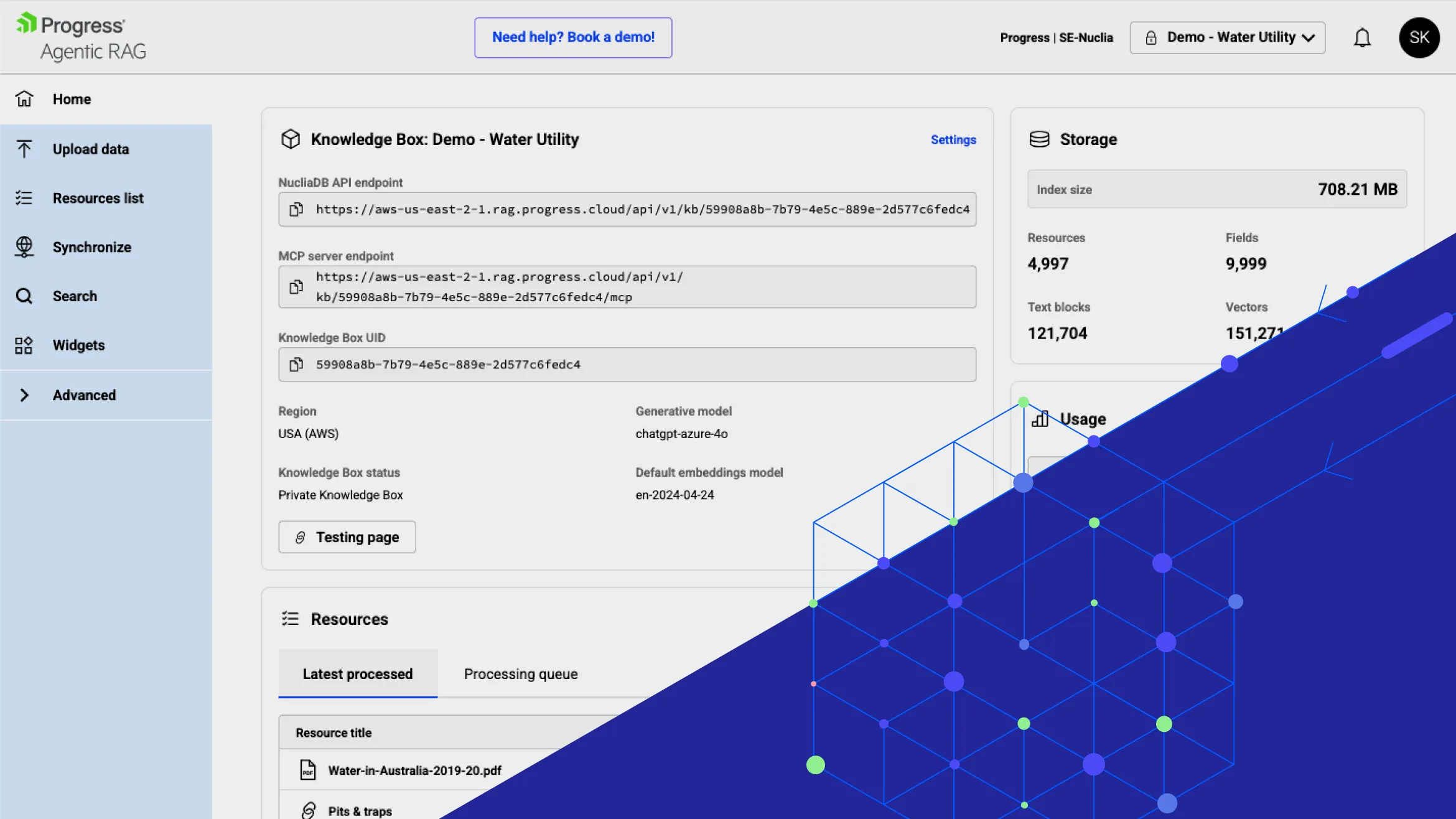

See It In Action

Build your knowledge layer once, then spin up grounded, traceable AI experiences against it again and again, using the same APIs and the same observability for every use case. No bespoke pipelines. No rewrites. Start your 14-day free trial and make agentic RAG technology a repeatable part of your stack.

Integrations

Connects where your data already lives.

Data Ingestion

- Documents (PDF, DOC, PPT, TXT)

- Media (video, audio)

- Web content (HTML, knowledge bases)

- Scheduled imports

LLMs

- OpenAI

- Anthropic

- Amazon

- Meta

- Mistral

- Hugging Face

- DeepSeek

- Bring-Your-Own

Sync Agents

- AWS S3

- Microsoft SharePoint

- Google Drive

- Dropbox

- ShareFile

MCP

- Business applications

- CRM

- CMS

- Customizable retrieval

Start Building with Agentic RAG

Launch grounded, agent‑driven AI experiences faster—without managing retrieval infrastructure.
