RAG vs. Fine-Tuning: Choosing the Right AI Strategy for Your Data

Learn the difference between fine-tuning a model and using RAG, and how to evaluate which is right for your use case.

What does it actually mean to make an AI system “know” your business?

Does it need to internalize your terminology and reasoning patterns at a deep level, baking them into its neural network weights? Or does it just need reliable, fast access to your documents so it can reference them when answering questions?

That distinction separates fine-tuning from Retrieval-Augmented Generation (RAG), and many teams don’t think it through carefully enough before committing time and budget.

Both approaches promise to connect general-purpose AI to your specific business context. Fine-tuning does it by retraining the model’s parameters on your data. RAG does it by giving the model real-time access to your knowledge base.

But the execution couldn’t be more different. Teams often hear “train it on our data” and assume fine-tuning is the answer, without considering whether retrieval would get them there faster and cheaper. Or they dismiss fine-tuning entirely without recognizing where it actually delivers better results than retrieval.

This article breaks down when each approach makes sense, what the real costs look like and how to avoid spending months building the wrong solution.

What Fine-Tuning Actually Does

Fine-tuning is simply the process of taking a pre-trained language model and retraining it on your specific data.

Technically, this means updating the model’s parameters (the weights in its neural network) based on your dataset. The goal is to adjust these internal weights so the model generates outputs that reflect your domain’s patterns, terminology and structure.

For example, if you’re a legal firm, you might fine-tune a model on thousands of your past case briefs. During this process, the model’s parameters are adjusted through additional training epochs, shifting its probability distributions to favor legal terminology, citation formats and argumentation styles specific to your practice.

It sounds useful. And in some cases, it is. But fine-tuning comes with baggage.

First, it’s expensive. You’re not just uploading data and hitting a button. You need to prepare training datasets, configure the training process, run compute-intensive jobs and test the results. For a 7B-parameter model like Mistral, full fine-tuning can run into the low five figures (some estimates put it around $12,000), while parameter-efficient methods like LoRA (Low-Rank Adaptation) typically land in the $1,000–$3,000 range depending on dataset size and training setup.
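To see why LoRA is so much cheaper than full fine-tuning, it helps to count trainable weights. LoRA freezes the original weight matrix and trains two small low-rank matrices in its place. The sketch below does that arithmetic for a single layer; the 4096 x 4096 layer size and the rank of 8 are illustrative assumptions, not figures from any particular model or training run.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable weights when the whole d_out x d_in matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable weights for the LoRA factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer: one 4096 x 4096 projection matrix, LoRA rank 8.
d_out, d_in, rank = 4096, 4096, 8
full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)

print(f"Full fine-tuning: {full:,} trainable weights")
print(f"LoRA (rank {rank}): {lora:,} trainable weights")
print(f"LoRA trains {lora / full:.2%} of this layer")
```

Under these assumptions, LoRA trains well under 1% of the layer's weights, which is where most of the savings in compute and memory come from.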

Second, it’s slow. Fine-tuning isn’t something you do once and forget. Every time your data changes—new products launch, policies update, customer preferences shift—you need to retrain the model. That could mean weeks or months between updates, depending on your dataset size and the computational resources available.

Third, it’s rigid. A fine-tuned model is locked into what it was trained on. If you want it to learn something new, you’re back to the beginning: preparing data, retraining, testing, deploying.

And here’s the part most teams don’t realize until it’s too late: fine-tuning doesn’t give a model a way to retrieve your data. Instead, the training data becomes absorbed into the model’s weights, shaping how it writes, reasons and answers questions.

So if you ask it something about a specific document, it can’t pull up the source or quote it reliably. It will generate an answer based on whatever patterns it learned during training, which may or may not be accurate. That’s where hallucinations creep in: the model sounds confident, but it’s reconstructing information from memory rather than grounding its answer in an actual document.

What RAG Actually Does

RAG takes a different approach. Instead of retraining a model, it connects the model to your data in real time.

Here’s how it works: When someone asks a question, the system first converts that query into a numerical representation called an embedding—a high-dimensional vector that captures the semantic meaning of the text.

The RAG system then searches your knowledge base (which has been pre-indexed using the same embedding model) to find documents with similar vector representations. This semantic search goes beyond keyword matching; it finds content that’s conceptually related, even if it uses different words.

The most relevant documents are retrieved and fed as context to the language model, which then generates an answer grounded in those specific sources. Unlike fine-tuning, the model isn’t recalling patterns from training; rather, it’s reading and synthesizing information that was just handed to it.
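The three steps above (embed the query, search the index, hand the results to the model) can be sketched in a few lines. This is a minimal illustration, not a production design: the `embed` function here is a toy word-count vector standing in for a real embedding model, so it only matches shared words rather than meaning, and the final LLM call is left out. The function names and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, index: list[tuple[str, Counter]], k: int = 2) -> list[str]:
    """Return the k documents whose vectors are closest to the query vector."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(query: str, sources: list[str]) -> str:
    """Assemble the context that would be sent to the language model."""
    numbered = "\n".join(f"[{i}] {s}" for i, s in enumerate(sources, start=1))
    return (
        "Answer using only these sources and cite them by number.\n"
        f"{numbered}\n\nQuestion: {query}"
    )


# Hypothetical knowledge base, indexed once with the same embed() function.
documents = [
    "Refunds are issued within 14 days of a return request.",
    "Standard shipping takes 3 to 5 business days.",
    "Support is available by email on weekdays.",
]
index = [(doc, embed(doc)) for doc in documents]

query = "How many days do refunds take?"
top = retrieve(query, index, k=1)
print(build_prompt(query, top))
```

Note that adding a document is just another `index.append(...)` call. Nothing about the model itself changes, which is the property the rest of this section relies on.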

This solves the core problems of fine-tuning:

- Speed: You don’t need to retrain anything. Upload new documents, and they’re immediately searchable and usable.

- Cost: No expensive training runs. You’re just retrieving and generating, which costs a fraction of what fine-tuning does.

- Accuracy: The model answers based on actual documents, not patterns it learned months ago. And because the retrieved passages can be cited alongside the answer, you can verify the source.

- Flexibility: Add new data sources, swap LLMs or adjust retrieval strategies without starting over.

RAG doesn’t teach the model to be an expert in your domain. It gives the model expert sources to reference, which is often more valuable.

Technical Trade-offs at a Glance

This table highlights the core technical trade-offs between fine-tuning and RAG.

| Aspect | Fine-Tuning | RAG |
|---|---|---|
| Model modification | Updates neural network weights | Keeps base model unchanged |
| Data freshness | Static (locked at training time) | Dynamic (updates in real-time) |
| Latency | Lower (single inference call) | Slightly higher (retrieval + inference) |
| Context window usage | Efficient (no external context needed) | Consumes tokens for retrieved context |
| Hallucination risk | Higher (generates from learned patterns) | Lower (grounded in retrieved sources) |
| Transparency | Black box (can’t trace reasoning) | Transparent (citable sources) |
| Computational cost | High upfront, low ongoing | Low upfront, moderate ongoing |
| Update mechanism | Retrain entire model | Index new documents |
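The context window row is worth a closer look, because every retrieved passage competes for the same token budget as the question and the answer. The sketch below shows one simple way to handle that: keep passages in ranked order until the budget runs out. The word-count token estimate and the budget of 18 are assumptions for illustration; a real system would use the tokenizer that matches its model.

```python
def approx_tokens(text: str) -> int:
    """Rough token estimate: whitespace-separated words. Real tokenizers differ."""
    return len(text.split())


def pack_context(chunks: list[str], budget: int) -> list[str]:
    """Keep chunks in ranked order until the next one would exceed the budget."""
    selected: list[str] = []
    used = 0
    for chunk in chunks:
        cost = approx_tokens(chunk)
        if used + cost > budget:
            break
        selected.append(chunk)
        used += cost
    return selected


# Hypothetical retrieval results, already sorted from most to least relevant.
ranked_chunks = [
    "Refunds are issued within 14 days of a return request.",
    "Returns must include the original receipt.",
    "Standard shipping takes 3 to 5 business days.",
]

kept = pack_context(ranked_chunks, budget=18)
print(f"Kept {len(kept)} of {len(ranked_chunks)} chunks")
```

Stopping at the first chunk that doesn't fit keeps the ranking intact. Skipping it and trying smaller chunks further down is a reasonable alternative if filling the window matters more than strict order.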

Where Fine-Tuning Still Makes Sense

RAG isn’t always the right answer. There are specific cases where fine-tuning is worth the investment.

If you need the model to adopt a very particular tone, style or format that’s hard to control with prompts alone, fine-tuning can help. For example, if you’re building a customer-facing chatbot that needs to sound exactly like your brand across thousands of interactions, fine-tuning might give you more consistency than prompting or RAG alone.

If your use case involves generating highly specialized outputs in a niche domain, like medical diagnostics or legal contract drafting, fine-tuning on domain-specific data can improve quality. The model learns the subtle reasoning patterns and domain conventions that prompts struggle to convey.

But even in these cases, you’re not choosing one or the other. Many teams use RAG to handle retrieval and grounding, then fine-tune the model to improve style or domain fluency. The two approaches can work together.

The Hidden Costs of Fine-Tuning

Let’s talk numbers for a second.

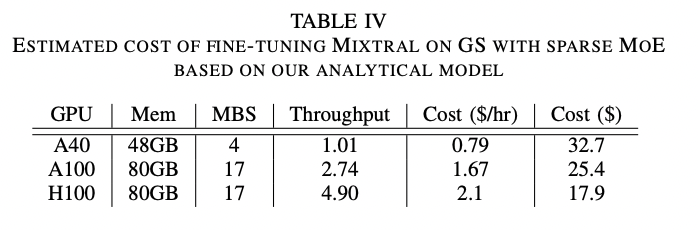

Beyond the direct training costs, fine-tuning brings hidden expenses that add up quickly. Published cost modeling shows how fast the bill scales: in one analysis, fine-tuning a Mixtral-class model on a small dataset cost as little as $18–$33 depending on the GPU used, but scaling that same workflow to a realistic enterprise dataset (around 2 million queries) pushed the cost to roughly $3,460 on an H100.

And that’s just the compute bill. It doesn’t include the hours your team spends preparing data, testing configurations or retraining when requirements change.

Data preparation alone can consume weeks of engineering time. You need to clean your datasets, format them correctly, remove noise and verify quality. If your data isn’t already structured for training, you could be looking at a significant upfront investment before you even start the fine-tuning process.
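A quick back-of-the-envelope calculation shows how labor can dwarf the compute bill once retraining becomes routine. The sketch below takes the roughly $3,460 per-run compute figure cited above and adds engineering time. The retraining frequency, hours per cycle and hourly rate are hypothetical placeholders, so swap in your own numbers.

```python
def annual_fine_tune_cost(
    compute_per_run: float,
    runs_per_year: int,
    prep_hours_per_run: float,
    hourly_rate: float,
) -> dict[str, float]:
    """Split a year's fine-tuning spend into compute and engineering labor."""
    compute = compute_per_run * runs_per_year
    labor = prep_hours_per_run * hourly_rate * runs_per_year
    return {"compute": compute, "labor": labor, "total": compute + labor}


costs = annual_fine_tune_cost(
    compute_per_run=3460,    # per-run compute figure cited in the article
    runs_per_year=4,         # assumption: retrain once per quarter
    prep_hours_per_run=80,   # assumption: two engineer-weeks of prep and testing
    hourly_rate=100,         # assumption: loaded engineering cost in dollars
)

for item, amount in costs.items():
    print(f"{item:>8}: ${amount:,.0f}")
```

With these placeholder inputs, labor comes to more than twice the compute cost, which is why the compute bill alone understates what fine-tuning costs.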

There’s also the opportunity cost. While your team is focused on managing fine-tuning workflows, they’re probably not building new features, optimizing customer experiences or solving the next business problem. In fast-moving markets, that delay can matter more than the dollar amount.

And if the model doesn’t perform as expected? You’re back to square one, except now you’ve burned budget and time with nothing to show for it. Unlike RAG, where you can test and iterate quickly, fine-tuning failures are expensive lessons.

RAG avoids most of this. The setup is faster, the iteration is cheaper and the results are immediately testable. You’re not locked into a multi-week training cycle just to find out if something works.

A Simple Decision Framework

If you’re trying to decide between RAG and fine-tuning, ask yourself these questions:

- Does my data change often? If yes, RAG is almost always the better choice. Fine-tuning can’t keep up with frequent updates without constant retraining.

- Do I need the model to reference specific documents or data points? If yes, RAG. Fine-tuning doesn’t give you retrieval or citations.

- Am I trying to change the model’s tone, style or behavior? If yes, fine-tuning might help. But try prompt engineering and RAG first—they often solve the problem without the overhead.

- Do I have the budget and time for retraining cycles? If no, RAG. Fine-tuning requires both, and the costs add up fast.

- Do I need answers I can verify? If yes, RAG. It provides citations back to the source, which builds trust and reduces hallucinations.

- Is my use case domain-specific with stable knowledge? If yes and you need consistent stylistic control, consider fine-tuning. Otherwise, start with RAG.

- Do I have clean, labeled training data ready to go? If no, RAG is the easier path. Fine-tuning requires significant data preparation.

Most teams discover that RAG solves 80% of what they thought required fine-tuning. And for the remaining 20%, combining both approaches often works better than either one alone.
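If it helps to make the checklist concrete, the questions above can be written down as a small function. This is one possible encoding of the framework, not a definitive rule set: the field names, the way the questions are grouped and the wording of the recommendations are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    data_changes_often: bool
    needs_citations: bool
    needs_style_control: bool
    has_training_data: bool
    has_retraining_budget: bool


def recommend(case: UseCase) -> str:
    """Map the checklist answers to a starting recommendation."""
    needs_retrieval = case.data_changes_often or case.needs_citations
    can_fine_tune = case.has_training_data and case.has_retraining_budget
    wants_fine_tune = case.needs_style_control and can_fine_tune
    if needs_retrieval and wants_fine_tune:
        return "RAG plus lightweight fine-tuning"
    if wants_fine_tune:
        return "fine-tuning, after trying prompting and RAG"
    return "start with RAG"


# Two hypothetical projects.
support_bot = UseCase(
    data_changes_often=True,
    needs_citations=True,
    needs_style_control=False,
    has_training_data=False,
    has_retraining_budget=False,
)
brand_writer = UseCase(
    data_changes_often=False,
    needs_citations=False,
    needs_style_control=True,
    has_training_data=True,
    has_retraining_budget=True,
)

print("Support bot: ", recommend(support_bot))
print("Brand writer:", recommend(brand_writer))
```

The function defaults to RAG whenever the case for fine-tuning is incomplete, which mirrors the advice to try retrieval first.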

Why Progress Agentic RAG Makes This Easy

If you’ve been reading this thinking, “RAG sounds great, but I don’t have the resources to build it from scratch,” that’s where Progress Agentic RAG comes in.

Most RAG systems require piecing together a vector database, embedding model, chunking strategy, retrieval algorithm, and LLM connection. Progress Agentic RAG eliminates that friction with a complete RAG-as-a-Service platform.

The platform automatically indexes files and documents from any source and delivers verifiable, high-quality answers. You can index any type of data in any language, define your own retrieval strategy and choose from various embedding models. AI agents enrich your content by recognizing entities, classifying topics, and building knowledge graphs that map connections across your information.

The platform includes REMi, a proprietary RAG evaluation model that assesses answer relevance, context relevance and groundedness. You get quality metrics to verify that outputs are accurate and traceable back to source material.

Start with one use case like internal search or video indexing, then expand as you see value. Because it’s modular, each workflow builds on the same foundation without rebuilding infrastructure.

Progress Agentic RAG accelerates AI-readiness by 95% and can save over 80% compared to building it yourself.

The Bottom Line

Fine-tuning and RAG aren’t interchangeable strategies. They solve different problems.

Fine-tuning teaches a model new behaviors by updating its neural network parameters. RAG gives a model access to your data through intelligent retrieval.

For most teams, RAG is the faster, cheaper and more practical option. It delivers grounded answers, handles changing data effortlessly and helps avoid the maintenance burden of constant retraining. The transparency it provides through citations also builds trust in a way that fine-tuned models can’t match.

Fine-tuning still has its place, especially when you need deep domain specialization or consistent stylistic control that’s difficult to achieve through prompting alone. But it’s not the default answer, and it’s not worth the investment unless you’ve tried RAG first and found it lacking.

The most effective approach often combines both: RAG for grounding and data access, with lightweight fine-tuning for behavioral consistency. But start with RAG. It solves the majority of use cases with a fraction of the complexity.

Want to skip the build-it-yourself headache? Start with Progress Agentic RAG and have a working system in hours, not months. Or schedule a walk-through to see how it handles your specific data types and use cases.