Fast Performance with Distributed Output Cache in Sitefinity

Sitefinity CMS now supports distributed output cache out of the box, delivering up to 200 times better performance in some scenarios. Learn how to apply it easily and where you should use it.

If you haven’t already heard, the recently released Sitefinity CMS 11.1 introduces full support for distributed output cache, among other upgrades. That is a bold statement: only a few other .NET CMS vendors can match it, and hardly any offer the level of integration that Sitefinity does.

If you’re already familiar with the concept of distributed output cache, and, like us in the product team, are equally excited to see this feature coming to Sitefinity CMS, this blog post is for you. If you just saw the catchy title, and decided to read further, don’t stop midway—this blog post is for you as well. In the following lines we’re going to explain what Sitefinity CMS distributed output cache is and why you should care about it.

What is Output Cache?

Let’s start with a quick overview of output cache. To summarize Microsoft’s definition, output cache is an optimization mechanism that allows ASP.NET to send a pre-processed copy of a page instead of going through the full process of running DB queries, assembling the page and sending the output to the client. Output cache exists to reduce web server response time. It is the most important caching layer as it's the first one that gets hit by an incoming request and guarantees that content is served instantly from cache instead of being processed on the server every time.

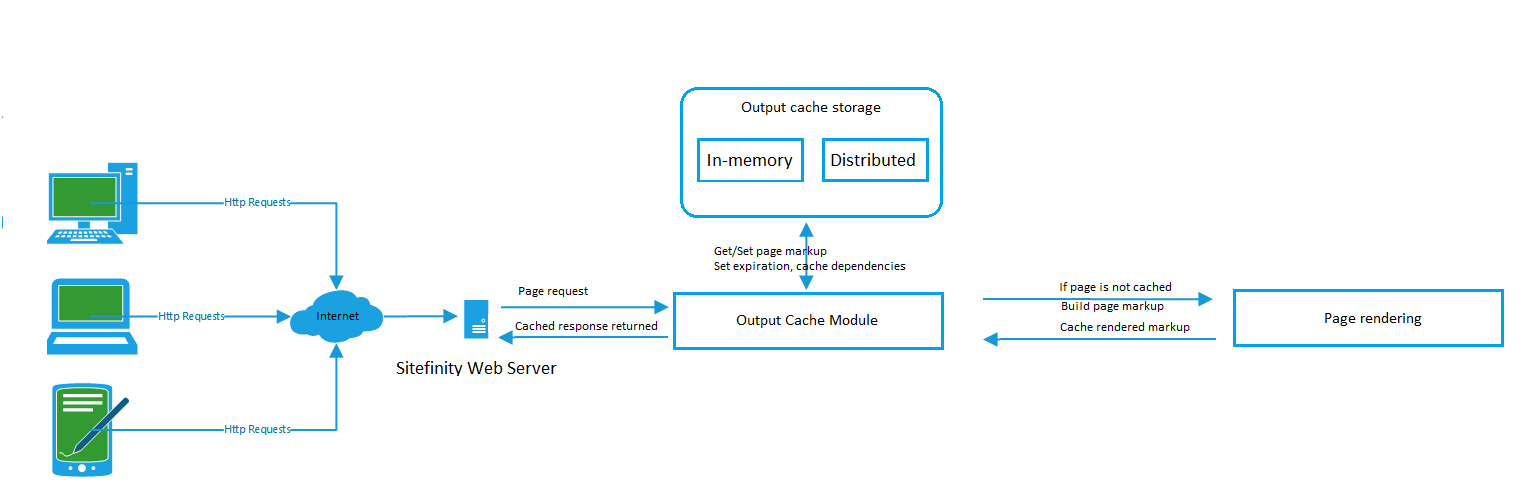

If we could draw a simple diagram that explains this concept, it would look something like this (click to see in full size):

By default, Sitefinity CMS stores output cache items in the web server’s memory. This is the fastest way to get content from cache to site visitors, so it delivers the best page response times. In-memory storage also needs no extra configuration or maintenance, and although it increases the server’s memory footprint, it adds no cost for external cache storage.
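To make the idea concrete, here is a minimal Python sketch of in-memory output caching. It is an illustration of the general technique only, not Sitefinity's implementation: `PageRenderer` and `handle_request` are hypothetical names, and a plain dictionary stands in for the web server's memory.

```python
class PageRenderer:
    """Hypothetical stand-in for the expensive work: DB queries plus page assembly."""

    def __init__(self):
        self.calls = 0  # counts how often a page was actually rendered

    def render(self, path):
        self.calls += 1
        return f"<html><body>Content for {path}</body></html>"


def handle_request(cache, renderer, path):
    """Serve a page from the output cache, rendering it only on a cache miss."""
    cached = cache.get(path)
    if cached is not None:
        return cached  # cache hit: skip rendering entirely
    html = renderer.render(path)
    cache[path] = html  # store the rendered output for later requests
    return html


cache = {}  # a plain dict standing in for in-memory cache storage
renderer = PageRenderer()
handle_request(cache, renderer, "/home")
handle_request(cache, renderer, "/home")
print(renderer.calls)  # 1
```

The second request never reaches the renderer, which is where the response-time savings come from.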

Why do We Need Distributed Output Cache?

Using distributed cache has several advantages compared to storing cache items in-memory. These advantages come from the different mechanism for reading and writing cache items. With distributed cache, only the first load-balanced node that serves a request for content that is not yet cached needs to process the content and store it in the distributed cache. All other nodes in the load-balanced setup then fetch the item from the distributed cache, so each node no longer needs to create its own cached version of the content. The centralized distributed cache storage brings the following benefits:

- Decreased CPU and Memory utilization on the web server nodes

- Pages load faster for the first request

- Cache data is not lost when the worker process recycles

- Reduced time to scale with an additional web server
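The difference between per-node and shared cache storage can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not how Sitefinity CMS is built: `Node` and `total_renders` are hypothetical names, and a shared dictionary stands in for an external store such as Redis or Memcached.

```python
class Node:
    """Hypothetical load-balanced web server node with a dict-like cache store."""

    def __init__(self, name, cache):
        self.name = name
        self.cache = cache
        self.renders = 0  # how many times this node had to build a page

    def handle(self, path):
        html = self.cache.get(path)
        if html is None:
            self.renders += 1  # cache miss: this node does the expensive work
            html = f"<html>{path}</html>"
            self.cache[path] = html
        return html


def total_renders(nodes, path):
    """Send one request for `path` to every node and count the renders."""
    for node in nodes:
        node.handle(path)
    return sum(node.renders for node in nodes)


# In-memory model: every node owns a separate cache.
isolated = [Node(f"node{i}", {}) for i in range(3)]

# Distributed model: all nodes read and write one shared store.
shared_store = {}
shared = [Node(f"node{i}", shared_store) for i in range(3)]

print(total_renders(isolated, "/home"))  # 3
print(total_renders(shared, "/home"))    # 1
```

With separate caches, each of the three nodes renders the page once. With the shared store, only the first node does, and a newly added node would start with a warm cache.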

To read more about the benefits of using distributed output cache, check out the Advantages of running Sitefinity CMS with Distributed output cache article from the official product documentation.

How Easy is it to Switch to Distributed Output Cache?

Distributed output cache doesn’t come as an add-on, community work, open source project, or any other format that would bring a somewhat dubious impression around the feature stability. It is a fully supported out-of-the-box feature. As a matter of fact, it takes a few seconds to configure on the Sitefinity side (add a few minutes on top of that for configuring the distributed cache storage of your choice).

It’s as easy as going to the Output Cache section in the Sitefinity CMS Advanced settings and selecting a Cache Provider value different than the default (InMemory).

When selecting a distributed output cache provider, you can choose between industry-recognized data storage options such as Redis and Memcached. Those of you who are already using Amazon services with Sitefinity CMS will be glad to see DynamoDB listed among the other supported distributed cache storages. Finally, it should come as no surprise that an SQLServer option is there as well, a great fit for those who prefer to host the distributed cache storage locally.

Each of these options comes with its own advantages, and you can pick the one that best suits you and your business needs. All of them work great with Sitefinity CMS support for distributed output cache. And, again—overall time to configure is less than five seconds.

Once you’ve picked your preference, you need to configure the selected provider, so Sitefinity CMS can connect to your distributed cache store and start doing its magic. This is done from the CacheProviders section under Output Cache settings, and takes another five seconds to complete.

Is Distributed Output Cache the Right Choice for Me?

To understand more about the performance gains when using distributed output cache, read the official product documentation. It provides a series of detailed performance tests executed against the same Sitefinity CMS instance using InMemory or Distributed output cache providers. The tests cover a wide range of scenarios including startup, warmup, and load tests to reflect the full scenario of a newly started/restarted site spinning up and warming up, as well as website browsing under load by site visitors. The documentation also provides a comparison of the pros and cons of InMemory and Distributed output cache, so you can make the right choice taking multiple factors into account, such as performance, scalability, and cost.

Show Me!

Let’s use the Redis provider for a quick practical example. We will use an active Microsoft Azure subscription and add a new Redis cache through the Azure management portal.

Make sure to configure your Redis cache in a Location as close as possible to your Sitefinity CMS web servers. When using distributed output cache, Sitefinity CMS reads and writes the cached items over the network, so network latency is a critical factor in the performance of distributed output cache.

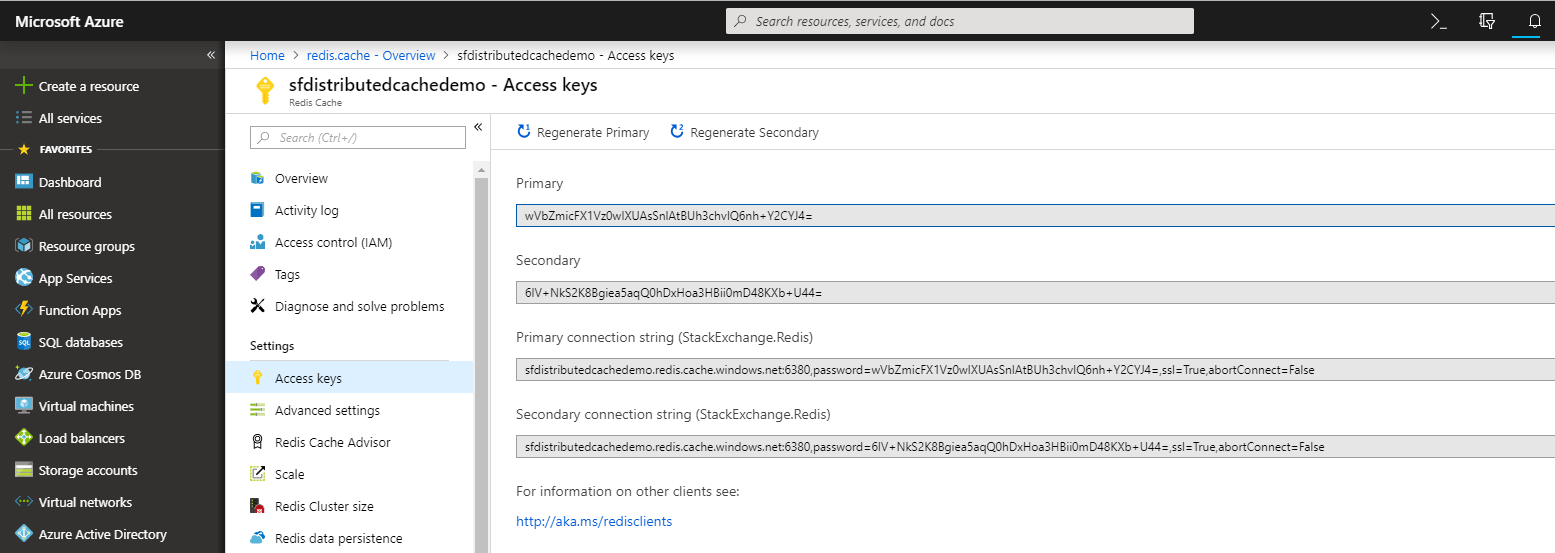

Once the new Redis cache is ready, you need to go to its settings page and open the Access keys configuration.

This screen contains the connection string, which you need to configure for the Redis output cache provider in Sitefinity CMS.
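Before pasting the connection string, it can help to check that it has the parts you expect. The Python sketch below assumes the string uses the comma-separated StackExchange.Redis style (`host:port,password=...,ssl=True,...`) that Azure typically displays. That format is an assumption about your setup, `parse_redis_connection_string` is a hypothetical helper, and the cache name and key in the example are placeholders.

```python
def parse_redis_connection_string(value):
    """Split a StackExchange.Redis-style connection string into its parts.

    Assumes the first comma-separated item is the endpoint (host[:port])
    and the remaining items are key=value options.
    """
    parts = [p.strip() for p in value.split(",") if p.strip()]
    endpoint = parts[0]
    options = {}
    for part in parts[1:]:
        # Split on the first "=" only, since access keys may end with "=".
        key, _, val = part.partition("=")
        options[key.strip().lower()] = val.strip()

    host, _, port = endpoint.partition(":")
    return {
        "host": host,
        "port": int(port) if port else 6379,  # 6379 is the default Redis port
        "ssl": options.get("ssl", "false").lower() == "true",
        "password": options.get("password"),
    }


# Placeholder values for illustration only; use your own cache name and key.
example = "contoso.redis.cache.windows.net:6380,password=<your-access-key>,ssl=True,abortConnect=False"
info = parse_redis_connection_string(example)
print(info["host"], info["port"], info["ssl"])
# contoso.redis.cache.windows.net 6380 True
```

If the host, port, or SSL flag does not match what the Azure portal shows for your cache, the copied string is probably incomplete.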

Now that you have the provider configured, make sure Redis is the selected Cache provider in Output cache settings.

Voila! You’re good to go the moment you click the Save changes button. Your Sitefinity CMS now uses Redis cache to store and retrieve the output cache items.

Conclusion

Choosing the right output caching configuration for your Sitefinity CMS is an important architectural decision that can affect not only your site’s performance and scalability, but also your costs.

Using distributed cache delivers up to 200 times better startup and warmup performance for a “cold start,” when pages have not yet been compiled, and up to five times better startup and warmup when a node is restarted (pages have already been compiled). This results in improved scalability and high availability, as well as a better browsing experience for users who hit a newly started node.

In-memory output cache storage certainly excels at providing the best average page response times once the site is warmed up. Distributed output cache still delivers very good average page response times and, depending on the distributed cache storage you use, it can improve your overall performance.

Now that Sitefinity CMS enables you to choose between in-memory and distributed output cache storage, you can leverage these capabilities to fine-tune your caching strategy and optimize your site’s performance.

Are you new to Sitefinity and looking to learn more? Request a demo today, or try Sitefinity CMS for yourself.