Reusable Decision Logic for Renewable Energy Sources

In the second post in this series, which began by analyzing the opportunities in renewable energy, we'll see how—without code—you can use business rules as an architectural layer to help justify any solar lending project.

In part one of this series, I outlined a few of the considerations for community and regional financial institutions (CRFIs) that are considering entering solar and other renewable energy lending markets. Next, I’m going to explore a few of the ways that lenders can help prospects economically justify these projects with Progress Corticon, the digital decisioning software engineered to manage complex rules and calculations, integrate with countless big data sources, and implement it all without writing a line of code.

Please note that this assumes some basic knowledge of the Corticon Studio interface and how REST APIs work—if you aren’t familiar with using Corticon, you can find quick-start tutorials here, or ask for help in the user community. To learn more about REST APIs, I recommend this blog from my colleague James Goodfellow.

To get started, let’s say I want to know the solar energy capture opportunity for a given location, in order to inform my marketing towards a specific local business—in this case, Progress Software. I’ll want to first ensure I have all necessary data about the Progress Software headquarters in Bedford, MA. I’ll do this using the free Google Maps Places API.

It is remarkably straightforward to access the Google Maps REST endpoint, as well as nearly any other API I’m interested in getting data from, directly from my business rules. I can do this code-free within Corticon Studio using the embedded DataDirect Autonomous REST Connector, which presents REST services to Corticon as if they were relational databases. There’s no need for me as the rule modeler to understand the complexities of the JSON response to start working effectively with the data.

With that in mind, I’ve created a new blank Rule Project, and a corresponding blank Rule Vocabulary. Now, I’m going to add my Maps datasource, and automate the creation of my rule vocabulary based on the structure of the JSON endpoint.

I’m going to copy the base URL for my first REST call into the “REST URL” field. This request will retrieve information about Progress from Google Maps.

https://maps.googleapis.com/maps/api/place/findplacefromtext/json?input=14%20oak%20park%20drive%20bedford&inputtype=textquery&fields=place_id,name,geometry&key={YOUR_API_KEY}

Once I’m ready, I’ll hit the ‘discover’ button. This tells Corticon to query the REST service using the REST URL and query parameters defined on the data source. The JSON returned will be used to generate a schema for mapping JSON from the REST service to a relational representation. The metadata for this schema is added to the Vocabulary for the Entities (tables), Attributes (columns), and Associations (join expressions). Let’s see if just “Progress” is enough to get us the data we need.
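For readers who want to see what that request looks like outside of Corticon Studio, here is a minimal Python sketch that assembles the same Find Place URL from its query parameters. The function name `build_find_place_url` is my own invention for illustration, and the API key is a placeholder. Corticon itself requires none of this code.

```python
from urllib.parse import urlencode

# Endpoint taken from the REST URL shown above.
FIND_PLACE_URL = "https://maps.googleapis.com/maps/api/place/findplacefromtext/json"


def build_find_place_url(search_text: str, api_key: str) -> str:
    """Return a Find Place request URL for a free-text search (hypothetical helper)."""
    params = {
        "input": search_text,                 # what you would type into the search bar
        "inputtype": "textquery",
        "fields": "place_id,name,geometry",   # same fields requested above
        "key": api_key,
    }
    # urlencode percent-encodes values (spaces become "+", commas become "%2C").
    return f"{FIND_PLACE_URL}?{urlencode(params)}"


url = build_find_place_url("14 oak park drive bedford", "YOUR_API_KEY")
print(url)
```

The encoded form differs cosmetically from the hand-written URL (for example, `+` instead of `%20` for spaces), but both are standard query-string encodings of the same parameters.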

The Autonomous REST Connector maps the JSON in a REST source to a relational database schema to align with the Rule Vocabulary, and then translates SQL statements to REST API requests.
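To make the idea of a "relational representation" of JSON more concrete, here is a deliberately simplified Python sketch that flattens a nested JSON object into a single row with column-like names. This is only an illustration of the concept, not the connector's actual mapping algorithm. The `flatten` helper, the column naming scheme, and the sample values (including the coordinates) are all made up.

```python
def flatten(record: dict, prefix: str = "") -> dict:
    """Flatten nested JSON objects into one row keyed by column-like names."""
    row = {}
    for key, value in record.items():
        column = f"{prefix}{key}"
        if isinstance(value, dict):
            # Nested objects become prefixed columns, e.g. geometry_location_lat.
            row.update(flatten(value, prefix=f"{column}_"))
        else:
            row[column] = value
    return row


# Hypothetical response shaped like a place search result; values are placeholders.
response = {
    "candidates": [
        {
            "place_id": "EXAMPLE_PLACE_ID",
            "name": "Example Site",
            "geometry": {"location": {"lat": 42.0, "lng": -71.0}},
        }
    ],
    "status": "OK",
}

# Each element of the JSON array becomes one row of a "candidates" table.
candidate_rows = [flatten(candidate) for candidate in response["candidates"]]
print(candidate_rows)
```

Seen this way, an array of JSON objects plays the role of a table, each object is a row, and nested fields become columns, which is roughly how Entities and Attributes line up with the data.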

To save time, I’m going to use Corticon’s vocabulary generation accelerator to create my vocabulary based on the relational representation of the endpoint. That way, all of my vocabulary’s entities and attributes will be automatically mapped to populate with live data from the REST endpoint for each decision service request.

After selecting the menu command Vocabulary > Populate Vocabulary from Datasource, a wizard opens to let you review the Datasource prior to creating the Vocabulary elements, where you can select the Tables and Columns to create as Entities and Attributes.

Before I model rules, I’ll create a new Rule Flow, and add a Service Callout to my Rule Flow’s canvas and select the REST endpoint from the runtime properties dropdown.

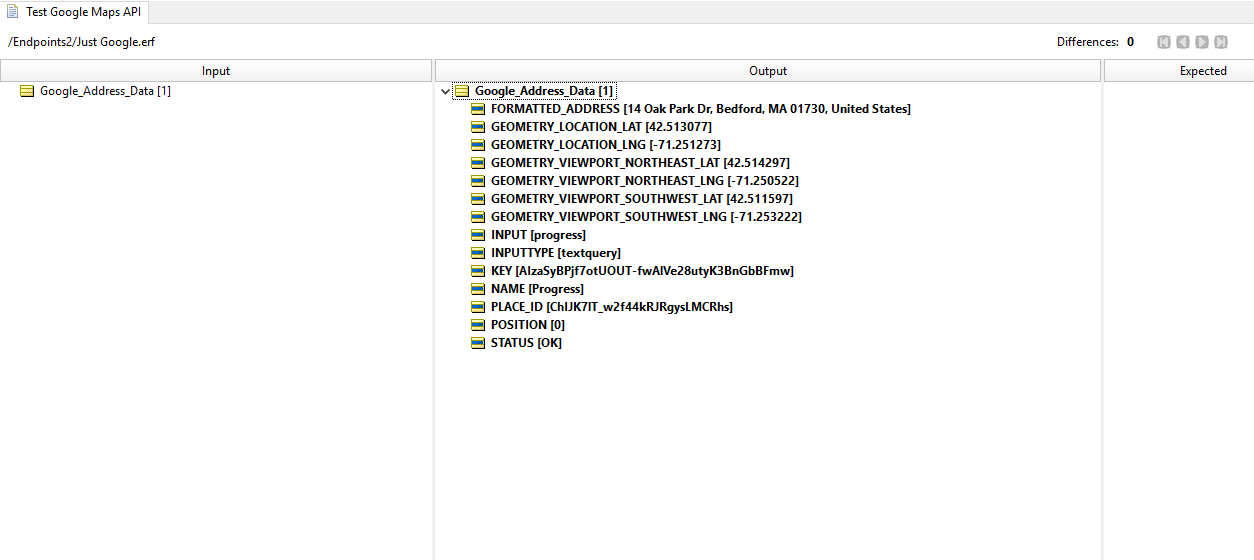

Next, I’ll run a new rule test against this rule flow, to ensure the data I’m looking for is being populated.

This checks out. The only data I needed to start with was the ‘input’ field for the Google Maps API request. Think of it like typing into the Google Maps search bar—even though Progress has offices around the world, Google doesn’t need more than my input of Progress Software Bedford.

Now, let’s figure out whether this is a worthwhile location for a solar energy project investment. I’ll be using a few sources to assist with this determination, and I’ll outline just a few of the myriad factors impacting solar energy yield.

The primary source of all energy to the Earth is radiant energy coming from the sun, which is measured by solar irradiance. NASA defines irradiance as the “amount of light energy from one thing hitting a square meter of another each second.” So, the most important variable here is the obvious one—how much solar energy is actually hitting the solar panels.

This is a complex variable to measure, let alone forecast, as not all rays of sunlight will go directly from the sun to a given point on Earth. It will often bounce between clouds or particles in the air first, before eventually making it down to Earth where it could be captured by a solar panel. The total of all irradiance that makes it to the Earth’s surface is known as Global Horizontal Irradiance. This measure encompasses both the sunlight that made it straight to Earth, and the sunlight that bounced around a bit before making it to Earth.

Source: National Renewable Energy Laboratory (NREL)

The National Renewable Energy Laboratory (NREL), a laboratory run by the U.S. Department of Energy, offers a free API that can tell us the average Global Horizontal Irradiance for a given latitude and longitude. The data is presented in kilowatt-hours per square meter per day. To get a baseline, I queried this endpoint with Postman using the latitude and longitude of Miami, FL.

https://developer.nrel.gov/api/solar/solar_resource/v1.json?api_key={API_KEY}&lat=25.7617&lon=-80.1918

This query about Miami returns a few different measurements, but the one I’m concerned with is Average Global Horizontal Irradiance or “avg_ghi”:

    "avg_ghi": {
        "annual": 5.17,
        "monthly": {
            "jan": 3.87,
            "feb": 4.55,
            "mar": 5.64,
            "apr": 6.54,
            "may": 6.52,
            "jun": 5.89,
            "jul": 6.26,
            "aug": 5.87,
            "sep": 4.99,
            "oct": 4.54,
            "nov": 3.89,
            "dec": 3.39
        }
    }
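As a quick sanity check on these numbers, here is a small Python sketch that reads the Miami values above, finds the strongest and weakest months, and converts the annual daily average into a yearly total per square meter. The variable names are my own, and the yearly figure is simply the daily average multiplied by 365. It describes sunlight reaching a horizontal surface, not the output of any particular panel.

```python
import json

# The avg_ghi values for Miami, copied from the response above
# (kilowatt-hours per square meter per day).
payload = json.loads("""
{
  "avg_ghi": {
    "annual": 5.17,
    "monthly": {
      "jan": 3.87, "feb": 4.55, "mar": 5.64, "apr": 6.54,
      "may": 6.52, "jun": 5.89, "jul": 6.26, "aug": 5.87,
      "sep": 4.99, "oct": 4.54, "nov": 3.89, "dec": 3.39
    }
  }
}
""")

avg_ghi = payload["avg_ghi"]
monthly = avg_ghi["monthly"]

best_month = max(monthly, key=monthly.get)    # month with the highest daily average
worst_month = min(monthly, key=monthly.get)   # month with the lowest daily average

# Daily average times 365 days gives kWh per square meter per year.
annual_kwh_per_m2 = avg_ghi["annual"] * 365

print(best_month, worst_month, round(annual_kwh_per_m2, 2))
```

For Miami, that works out to roughly 1,887 kWh of solar energy reaching each horizontal square meter per year, with April the strongest month and December the weakest.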

My assumption is that when I do the same for Bedford, MA, I’ll get lower values, as it tends to be much greyer here than in Miami. But instead of just doing another Google search for the latitude/longitude of the Progress headquarters, I want to be able to use my previous API call to the Google Maps endpoint to populate the latitude/longitude for me, so that I only need to provide one input, “Progress.”

I’ll do this by leveraging three REST endpoints, each tying back to one entity, my “Project_Site.” My first API call will be a Google Place Search, which will return me a PlaceID that I can then use in subsequent calls to other Google APIs. My second call will be to Google Place Details, where I’ll request data such as latitude/longitude for the given PlaceID. Finally, I’ll take this latitude/longitude output, and use it as the input to the NREL solar data API.
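Corticon handles this chaining without code, but the same three-step flow can be sketched in Python to show what is happening underneath. The function names and the injectable `fetch` parameter are hypothetical. The response field names (`candidates`, `result`, `outputs`) reflect my understanding of these APIs and should be checked against their current documentation. The sketch also skips error handling, such as a search that returns no candidates.

```python
import json
from typing import Callable
from urllib.parse import urlencode
from urllib.request import urlopen

GOOGLE_PLACES = "https://maps.googleapis.com/maps/api/place"
NREL_SOLAR = "https://developer.nrel.gov/api/solar/solar_resource/v1.json"


def fetch_json(url: str) -> dict:
    """Perform a GET request and decode the JSON body."""
    with urlopen(url) as response:
        return json.load(response)


def lookup_avg_ghi(search_text: str, google_key: str, nrel_key: str,
                   fetch: Callable[[str], dict] = fetch_json) -> dict:
    """Chain Place Search -> Place Details -> NREL solar data (illustrative only)."""
    # Step 1: the free-text search yields a place ID.
    search_url = f"{GOOGLE_PLACES}/findplacefromtext/json?" + urlencode({
        "input": search_text, "inputtype": "textquery",
        "fields": "place_id", "key": google_key,
    })
    place_id = fetch(search_url)["candidates"][0]["place_id"]

    # Step 2: the place ID yields latitude/longitude.
    details_url = f"{GOOGLE_PLACES}/details/json?" + urlencode({
        "place_id": place_id, "fields": "geometry", "key": google_key,
    })
    location = fetch(details_url)["result"]["geometry"]["location"]

    # Step 3: the coordinates become the input to the NREL solar data API.
    nrel_url = f"{NREL_SOLAR}?" + urlencode({
        "api_key": nrel_key, "lat": location["lat"], "lon": location["lng"],
    })
    return fetch(nrel_url)["outputs"]["avg_ghi"]
```

Calling `lookup_avg_ghi("progress software", GOOGLE_KEY, NREL_KEY)` with real keys would make three live requests. Passing a stub as `fetch` lets you exercise the chaining logic without network access.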

I don’t need to manually create or map my rule vocabulary to the data structure of these REST endpoints; I can use the vocabulary generation tool for this. This way, Corticon will also auto-generate the ‘join’ expressions that define the queries to the REST service. Even after connecting my Rule Vocabulary to three different REST services and defining the relationships between them, I only need two entities in my vocabulary. At runtime, Corticon will retrieve the data that corresponds to each of the attributes under the entities (Project_Site and NREL_SOURCES) from their respective datasources. With my vocabulary created and mapped, I’ll create a Rule Flow that will organize my REST calls, and add in a few rules to document the data being populated from these external services.

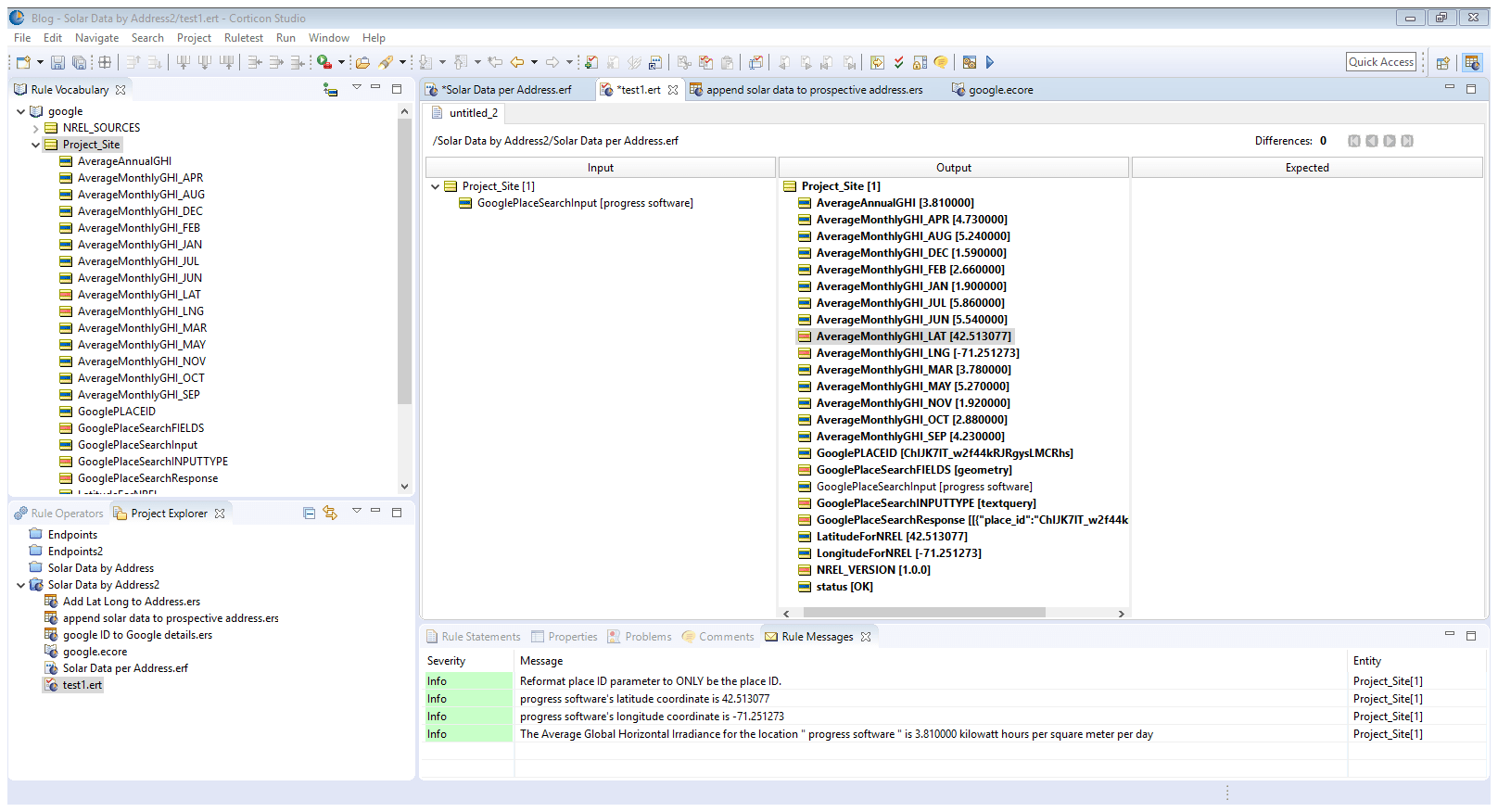

Now, I want to test to see if this is actually working as intended. As a reminder, I want to get data about the potential solar energy that could be captured for a given location, in order to inform ROI calculations for solar investments. Moreover, I want to do all of this by only inputting a search query for a location, as I would do in Google Maps.

It worked! As shown in the left ‘input’ column, the only data I provided in my Rule Test was my GooglePlaceSearchInput of “progress software.” This alone was enough to identify the site with a placeID, use the placeID to get the coordinates of the site, and then use those coordinates to retrieve the average global horizontal irradiance value for those coordinates by month and annually.

While it’s disappointing to see a much lower value than what I saw for the city of Miami, it certainly does not disqualify this as a site. I retrieved data from several APIs and organized it all into just one entity in my Rule Vocabulary, the Project_Site, without writing a line of code or manually defining connections and mappings. I could go in countless other directions from here, considering how easy it is to keep leveraging these REST services that I don’t need to maintain myself. I could, for example:

- Target prospects in geographies with higher utility costs by leveraging data from the Open Energy Information U.S. Utility Rate Database

- Prioritize marketing toward companies that stand to benefit most from renewable energy, based on their location’s GHI measurements and additional factors defined in NREL’s REopt Lite API, such as their roof’s square footage or the number of acres of land they own

- Model business rules that incorporate complex eligibility calculations that go into each of the programs in the Database of State Incentives for Renewables and Efficiency (DSIRE), in order to pre-qualify prospects and quickly get through the origination process

- Work with one of the many states that are already using Corticon for defining eligibility requirements for Medicaid assistance programs, to develop and market Lower-Income Solar subsidy programs, leveraging business rules for eligibility determinations

- Assess market opportunity of lending mechanisms for other renewable energy sources such as wind, by incorporating data from NREL’s Wind Integration National DataSet

There are many complex variables involved with financing renewable energy investments, but the cost barriers to entry are far less of an encumbrance than they once were. Similar to how states run complex marketplaces to connect healthcare providers with members of government-subsidized health plans in the United States, marketplaces to connect consumers with government-subsidized renewable energy funding are maturing nationwide. New entrants to these marketplaces can solidify their market standing by adopting the same technological principle used by the major stakeholders in the healthcare marketplaces: code-free, high-performance digital decisioning.

To see how Corticon can help you rapidly deliver projects like these, contact us to schedule a free live demo or sign up for a free trial today.