A Deep Dive Into the World of Data Science

Data science is a hot topic. We examine its history, from the beginning in 1962 to how it's used today—and explore why that matters to you.

What is data science, and how do companies use it day to day? To answer those questions, we set out to gain a deeper understanding of the data science ecosystem: why it matters to you now and what it will mean for you in the future.

The Beginning

The roots of data science are often traced back to 1962, when John W. Tukey wrote “The Future of Data Analysis.” He argued that data analysis is intrinsically an empirical science and predicted that computer programs would become essential to the future of research. In the decades that followed, computers and data processing did indeed become central to research.

The 1970s saw the publication of Peter Naur’s Concise Survey of Computer Methods and the founding of the International Association for Statistical Computing. Data science grew rapidly through the 1990s and early 2000s into the sexy job that we are all jealous of today. See Forbes’ article on the history of data science for a more detailed look!

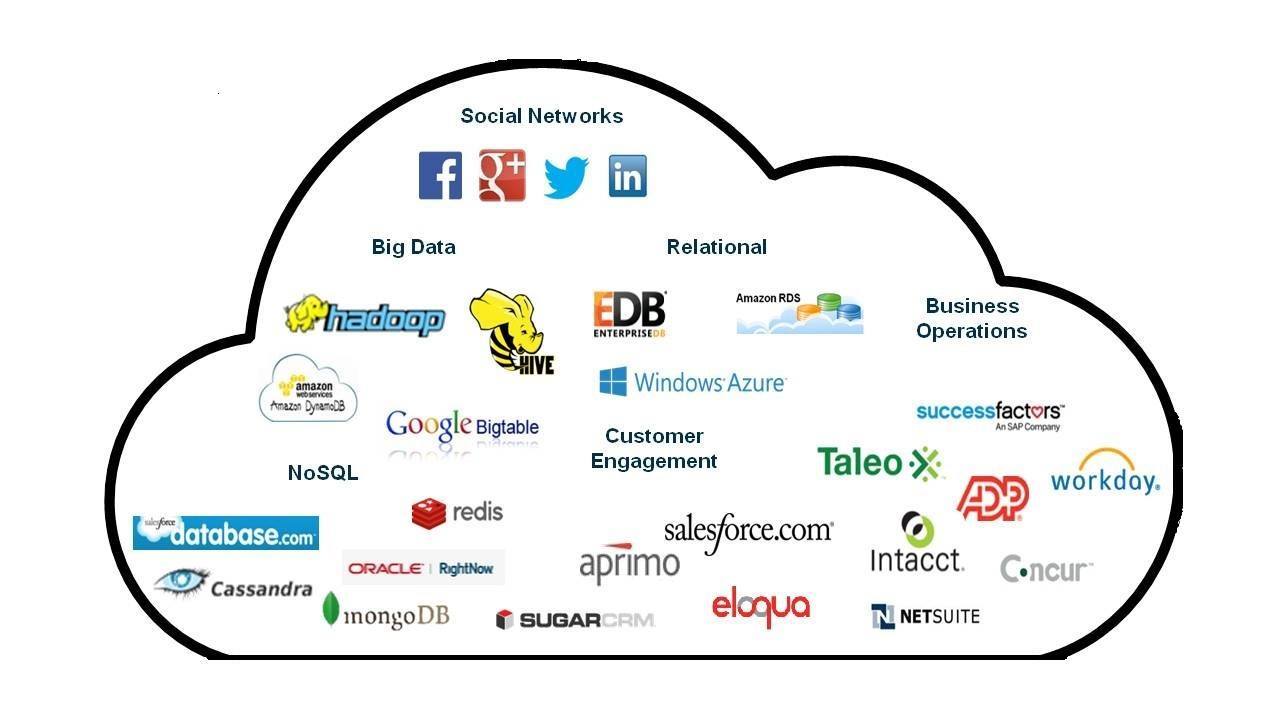

Snapshot of Today’s Ecosystem

Compared to the early days of data analysis, data science has grown immensely. Because data storage was once expensive, companies regularly ran out of room; storage and analysis costs have since fallen sharply. Today, enterprises can run regressions and discover trends using years of customer data and external data sources. Data science is only possible with quality data, however, so let’s break down where this data comes from and how to use it.

Data Sources

The distinction between statisticians and data scientists is that statisticians are given data and run regressions, while data scientists find the data, organize and analyze it, and then communicate its relevance to their organization in an understandable, actionable way. To produce actionable insights, data scientists need quality data, and that starts with quality sources.

Data sources are divided into three main categories: databases, applications, and third-party data.

Databases

Databases can be structured or unstructured. Structured (relational) databases are queried with SQL and store data in a fixed, predefined set of columns. They are generally used by organizations such as banks, financial institutions, and other operations that need consistent, reliable data.

Unstructured databases (often grouped under the NoSQL label) are much more flexible than structured databases. This flexibility reduces friction when querying vast amounts of data and allows the data to be examined in ways that structured data cannot. The trade-off is less strict consistency, but the approach underpins some of the largest search and recommendation systems, such as those at Google and Yahoo.
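To make the contrast concrete, here is a minimal Python sketch. It assumes an in-memory SQLite table can stand in for a structured database and a list of JSON documents can stand in for a schema-flexible store; the `customers` table and every field name are hypothetical, chosen only for illustration.

```python
import json
import sqlite3

# Structured: every row must fit the same predefined columns.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers ("
    "id INTEGER PRIMARY KEY, name TEXT NOT NULL, balance REAL NOT NULL)"
)
conn.execute("INSERT INTO customers (id, name, balance) VALUES (?, ?, ?)", (1, "Ada", 120.5))
conn.execute("INSERT INTO customers (id, name, balance) VALUES (?, ?, ?)", (2, "Grace", 80.0))

# A row that omits a required column is rejected by the schema.
try:
    conn.execute("INSERT INTO customers (id, name) VALUES (?, ?)", (3, "Linus"))
    rejected = False
except sqlite3.IntegrityError:
    rejected = True

# Because every row has a balance, aggregate queries are straightforward.
total_balance = conn.execute("SELECT SUM(balance) FROM customers").fetchone()[0]

# Schema-flexible: each document can carry different fields.
documents = [
    json.loads('{"id": 1, "name": "Ada", "balance": 120.5}'),
    json.loads('{"id": 2, "name": "Grace", "interests": ["compilers", "navy"]}'),
    json.loads('{"id": 3, "name": "Linus", "last_login": "2024-01-05"}'),
]

# Queries must tolerate missing fields, here via dict.get().
with_interests = [d["name"] for d in documents if d.get("interests")]

print(rejected, total_balance, with_interests)
```

The structured table enforces consistency up front (the incomplete row never gets in), while the document list accepts anything and pushes the burden of handling missing fields onto every query. That is the trade-off described above, in miniature.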

Third-Party Data

According to Bernard Marr, the first data center was built in 1965 by the US government to house 742 million tax returns and 175 million fingerprints. Since then, government data has become one of the most reliable big data sources for research, and corporate investment in big data has become commonplace. Vendors like Amazon Web Services host massive volumes of public data, and others like Factual sell valuable business data. If you have the integration and computing power, running regressions on data sets this large can reveal trends that save large enterprises millions.

Applications

One of the first successful cloud applications was Salesforce, which launched in 1999 with the vision of delivering viable enterprise business applications and data storage via the web. The idea of storing business data in the cloud rather than on premises was widely seen as ludicrous until Salesforce broke this barrier. Since then, cloud applications for storing and analyzing data have become an absolute must for most businesses.

The main drawback of these applications is that most businesses don’t use just one. You may use Workday for HR management, Google Analytics to measure performance, Salesforce to track leads, and Marketo to crunch marketing data, and the list goes on. These systems don’t talk to one another, and that’s where data integration comes into play.
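As a rough illustration of what integration means at the data level, the Python sketch below joins two exports on a shared key. It assumes two hypothetical record lists, one shaped like CRM leads and one like web analytics sessions, that both carry an email field; the field names and the `integrate` helper are invented for this example and do not model any vendor’s actual API or connector.

```python
from collections import defaultdict

# Hypothetical export from a CRM (one row per lead).
crm_leads = [
    {"email": "ada@example.com", "company": "Analytical Engines", "stage": "qualified"},
    {"email": "Grace@Example.com ", "company": "Compilers Inc", "stage": "new"},
]

# Hypothetical export from a web analytics tool (one row per visit).
web_sessions = [
    {"email": "ada@example.com", "page": "/pricing"},
    {"email": "ADA@example.com", "page": "/docs"},
    {"email": "linus@example.com", "page": "/blog"},
]


def normalize(email):
    """Normalize the join key so formatting differences don't break the match."""
    return email.strip().lower()


def integrate(leads, sessions):
    """Join the two sources on email, producing one combined record per lead."""
    visits = defaultdict(list)
    for session in sessions:
        visits[normalize(session["email"])].append(session["page"])

    combined = []
    for lead in leads:
        # .get() avoids inserting empty entries into the defaultdict.
        pages = visits.get(normalize(lead["email"]), [])
        combined.append({**lead, "pages_visited": pages})
    return combined


unified = integrate(crm_leads, web_sessions)
for row in unified:
    print(row["company"], row["stage"], row["pages_visited"])
```

This is a left join from the CRM side: visitors who never became leads (the `linus@example.com` session) are dropped. Even this toy version has to settle key normalization and join direction; real integrations add authentication, pagination, and schema mapping for each vendor’s API.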

Get the ROI on Your Data

Where do you even start with integration when there are so many data sources, vendors, and APIs? No one wants to deal with this daunting task, but it is essential for anyone looking for a return on investment from their big data sources.

Luckily, our team at Progress DataDirect offers the most complete, robust, and versatile set of data connectors available. Our job and promise is to connect any data source to any application and to give you the best customer service in the industry. Let us help you with your data migration, integration, and management; we’re number one for a reason!

We have only discussed the basics so far—stay tuned for the rest of this series where we will hear from Justin Moore (Data Scientist, Progress DataDirect) and Sumit Sarkar (Senior Evangelist, Progress DataDirect) and delve deeper into the world of data science.

Suzanne Rose

Suzanne Rose was previously a senior content strategist and team lead for Progress DataDirect.