How Our Engineers Design Performance

In this blog series about performance, we talk about what’s happening in the industry and discuss data connectivity trends and tips. Past topics include OData, Database Wars and Data Lakes.

Deep Dive into Architecture

In today’s blog, we’ll go one level deeper and start talking about architecture: how we design our software for performance. It’s likely the same approach you use when you design your own applications.

When we design for performance, one of the first things my engineers do in the performance lab is count the number of calls through the stack. They count from the moment the application hands a request to us until our software actually sends it over the socket. That boundary is well defined because our database drivers sit directly on the socket. They are the last layer a request passes through before it reaches the database or data source.

Fewer Dependencies Maximize Efficiency

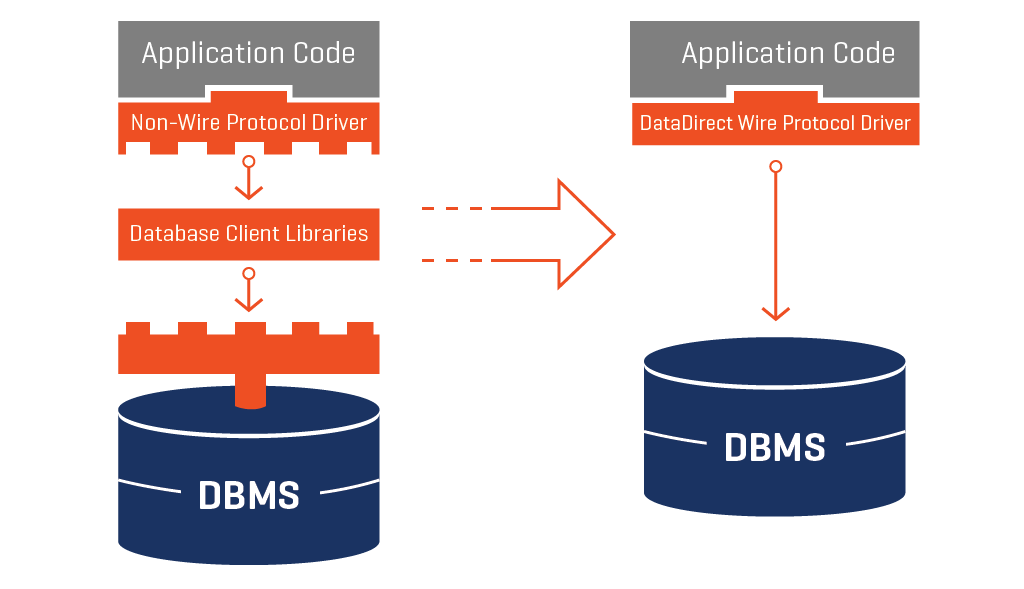

We used to build connectors by writing a driver, typically based on the ODBC or JDBC standard, that sat on top of the database’s client libraries. The driver translated each request into calls to those libraries, and the libraries in turn talked to the database itself.

As an architect, you would look at this and think, “Wow, that’s a lot of layers and a lot of dependencies.” The code in those client libraries is not code you own, so you have to wonder: “What compiler options were used to build it? How many layers thick is it before you get down to the wire? What’s going on behind the scenes?”

A Better Way


Our architects soon determined there was a better way. We took the functionality of the client library and squished it together with the driver on top of it. The result is a single layer underneath the application code that goes directly to the database or data source, such as Salesforce. There are fewer layers to go through, and you eliminate dependencies on third-party code.

Why This Matters

Because the database driver (or other piece of connectivity software) sits directly on top of your TCP/IP sockets and your operating system, it can go straight to the source. That lets us do some really interesting things, such as using C++ concurrency constructs introduced in the last few years to shift workloads among processors and take advantage of your thread pools. Our wire protocol drivers use all of these techniques to deliver the most performance possible.

Fastest in the Industry

The drivers my team and I build here at Progress® DataDirect® are designed to be the fastest in the industry, and we back that claim with award-winning technical support. If you find a performance degradation in our software, we treat it as a defect, because we understand how important speed is to your business. You can pick up a free trial today and try it out for yourself, or watch a replay of my webinar, “Industry Insight: Optimizing Your Data for Better Performance.” Don’t forget to check back for the next installment of this series!