Import Salesforce Data into Google BigQuery Using Google Cloud Dataflow

Introduction

Google Cloud Dataflow is a data processing service for both batch and real-time data streams. Dataflow lets you build pipelines that ingest data, then transform and process it as needed before making it available to analysis tools.

In this tutorial, you'll learn how to extract, transform, and load (ETL) Salesforce data into Google BigQuery using Google Cloud Dataflow and the DataDirect Salesforce JDBC driver. The tutorial uses a Java project, but similar steps apply when using Apache Beam to read from other JDBC data sources, including SQL Server, IBM DB2, Amazon Redshift, Eloqua, Hadoop Hive, and more.

Install Progress DataDirect Salesforce JDBC driver

- Download the DataDirect Salesforce JDBC driver from here.

- To install the driver, run the .jar package, either by executing the following command in a terminal or by double-clicking the file.

java -jar PROGRESS_DATADIRECT_JDBC_SF_ALL.jar

- This launches an interactive Java installer that lets you install the Salesforce JDBC driver to a location of your choice, as either a licensed or an evaluation installation.

- Note that the installer also places several other DataDirect drivers in the same folder for trial purposes.

Setting up the Google Cloud Dataflow SDK and Project

- Complete the steps in the Before you begin section of this quickstart from Google.

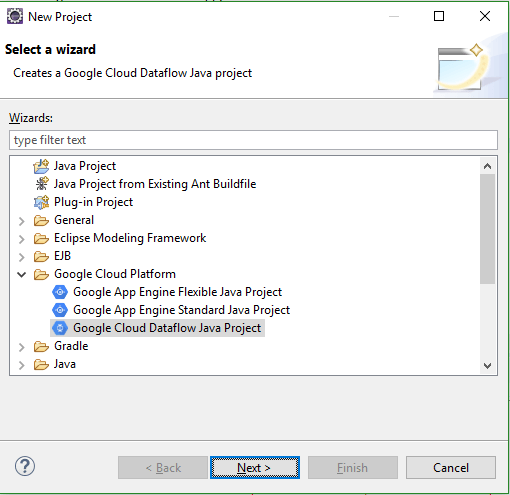

- To create a new project in Eclipse, go to File -> New -> Project.

- In the Google Cloud Platform directory, select Google Cloud Dataflow Java Project.

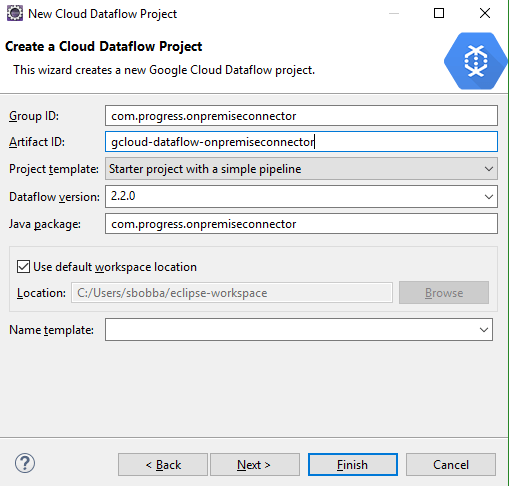

- Fill in the Group ID and Artifact ID.

- From the Project Template drop-down, select Starter Project with a simple pipeline.

- Select 2.2.0 or above as the Dataflow version.

- Click Next, and the project should be created.

- Add the JDBC IO library for Apache Beam from Maven and the DataDirect Salesforce JDBC driver to the build path. You can find the Salesforce JDBC driver in the install path.
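
Before building the pipeline, it can help to confirm that the driver jar really is on the classpath. The following is only a minimal sketch: the class `DriverCheck` and the method `isDriverAvailable` are hypothetical names introduced for illustration, while the driver class name `com.ddtek.jdbc.sforce.SForceDriver` is the one this tutorial passes to JdbcIO later on.

```java
// Hypothetical helper for illustration; not part of the tutorial's project.
class DriverCheck {

    // Returns true if the named class can be loaded from the current classpath.
    static boolean isDriverAvailable(String driverClassName) {
        try {
            Class.forName(driverClassName);
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            // The class is missing, or it was found but could not be linked/initialized.
            return false;
        }
    }

    public static void main(String[] args) {
        // Driver class name taken from the JdbcIO configuration in this tutorial.
        String driver = "com.ddtek.jdbc.sforce.SForceDriver";
        System.out.println(driver + " on classpath: " + isDriverAvailable(driver));
    }
}
```

If this reports `false` when run from the Eclipse project, the driver jar is most likely missing from the build path.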

Creating the Pipeline

- The main goal of this tutorial is to connect to Salesforce, read the data, apply a simple transformation, and write the result to BigQuery. The code for this project has been uploaded to GitHub for your reference.

- Open the StarterPipeline.java file and clear all the code in the main function.

- First, create the pipeline:

    Pipeline p = Pipeline.create(PipelineOptionsFactory.fromArgs(args).withValidation().create());

- This uses the program's input arguments to configure the pipeline.

- Connect to Salesforce through the DataDirect Salesforce JDBC driver and read the data into a PCollection using the JdbcIO.<T>read() method, as shown below.

    PCollection<List<String>> rows = p.apply(JdbcIO.<List<String>>read()
        .withDataSourceConfiguration(JdbcIO.DataSourceConfiguration.create(
                "com.ddtek.jdbc.sforce.SForceDriver",
                "jdbc:datadirect:sforce://login.salesforce.com;SecurityToken=<Security Token>")
            .withUsername("<username>")
            .withPassword("<password>"))
        .withQuery("SELECT * FROM SFORCE.NOTE")
        .withCoder(ListCoder.of(StringUtf8Coder.of()))
        .withRowMapper(new JdbcIO.RowMapper<List<String>>() {
            public List<String> mapRow(ResultSet resultSet) throws Exception {
                List<String> addRow = new ArrayList<String>();
                // Get the schema for BigQuery
                if (schema == null) {
                    schema = getSchemaFromResultSet(resultSet);
                }
                // Create a list of strings for each record returned by the JDBC driver
                for (int i = 1; i <= resultSet.getMetaData().getColumnCount(); i++) {
                    addRow.add(i - 1, String.valueOf(resultSet.getObject(i)));
                }
                // LOG.info(String.join(",", addRow));
                return addRow;
            }
        }))

- Once the data is in a PCollection, apply a transformation using ParDo, which iterates over every element in the PCollection. The example below also shows, in commented-out lines, how you could hash the values of a column.

        .apply(ParDo.of(new DoFn<List<String>, List<String>>() {
            // Apply transformation: mask the email addresses by hashing the value
            @ProcessElement
            public void processElement(ProcessContext c) {
                List<String> record = c.element();
                List<String> record_copy = new ArrayList<String>(record);
                // String hashedEmail = hashemail(record.get(11));
                // record_copy.set(11, hashedEmail);
                c.output(record_copy);
            }
        }));

- Then, convert the PCollection, in which each row is a List<String>, into TableRow objects from the BigQuery model.

    PCollection<TableRow> tableRows = rows.apply(ParDo.of(new DoFn<List<String>, TableRow>() {
        // Convert the rows to BigQuery TableRows
        @ProcessElement
        public void processElement(ProcessContext c) {
            TableRow tableRow = new TableRow();
            List<TableFieldSchema> columnNames = schema.getFields();
            List<String> rowValues = c.element();
            for (int i = 0; i < columnNames.size(); i++) {
                tableRow.put(columnNames.get(i).get("name").toString(), rowValues.get(i));
            }
            c.output(tableRow);
        }
    }));

- Finally, write the data to BigQuery using the BigQueryIO.writeTableRows() method, as shown below.

    // Write table rows to BigQuery
    tableRows.apply(BigQueryIO.writeTableRows()
        .withSchema(schema)
        .to("nodal-time-161120:Salesforce.NOTE")
        .withWriteDisposition(BigQueryIO.Write.WriteDisposition.WRITE_APPEND));

Note: Before you run the pipeline, go to the BigQuery console and create the table with the same schema as your Salesforce table.
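
The read step calls getSchemaFromResultSet, which this tutorial does not show, and the note above asks for a BigQuery table whose schema matches the Salesforce table. As a rough illustration of one piece such a helper might need, the sketch below maps JDBC type codes to BigQuery column type names. The class `JdbcToBigQueryTypes`, the method `bigQueryTypeFor`, and the mapping itself are assumptions made for this example, not the project's actual code.

```java
import java.sql.Types;

// Hypothetical helper: the mapping below is an assumption, not the tutorial's code.
class JdbcToBigQueryTypes {

    // Maps a java.sql.Types code to a BigQuery column type name.
    static String bigQueryTypeFor(int jdbcType) {
        switch (jdbcType) {
            case Types.BIT:
            case Types.BOOLEAN:
                return "BOOLEAN";
            case Types.TINYINT:
            case Types.SMALLINT:
            case Types.INTEGER:
            case Types.BIGINT:
                return "INTEGER";
            case Types.REAL:
            case Types.FLOAT:
            case Types.DOUBLE:
                return "FLOAT";
            case Types.DATE:
                return "DATE";
            case Types.TIMESTAMP:
                return "TIMESTAMP";
            default:
                // Text columns and anything unrecognized fall back to STRING.
                return "STRING";
        }
    }

    public static void main(String[] args) {
        System.out.println(bigQueryTypeFor(Types.VARCHAR));
        System.out.println(bigQueryTypeFor(Types.BIGINT));
    }
}
```

A schema helper could call this with `resultSet.getMetaData().getColumnType(i)` for each column. Because the row mapper in this tutorial converts every value to a String, falling back to STRING is the conservative choice for types the mapping does not cover.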

- You can find all the code for this project on GitHub for your reference.
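
The transformation step refers to a hashing helper in its commented-out lines but does not show how it is implemented. One possible way to write it, assuming SHA-256 with hex output is an acceptable masking scheme, is sketched below. The class `EmailHasher` and the method `hashEmail` are hypothetical names, and the project's own helper may work differently.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

// Hypothetical helper: one possible way to mask a value by hashing it.
class EmailHasher {

    // Returns the SHA-256 digest of the input as a lowercase hex string.
    static String hashEmail(String email) {
        try {
            MessageDigest digest = MessageDigest.getInstance("SHA-256");
            byte[] hash = digest.digest(email.getBytes(StandardCharsets.UTF_8));
            StringBuilder hex = new StringBuilder(hash.length * 2);
            for (byte b : hash) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            // Every Java platform is required to provide SHA-256.
            throw new IllegalStateException("SHA-256 is not available", e);
        }
    }

    public static void main(String[] args) {
        System.out.println(hashEmail("user@example.com"));
    }
}
```

The same input always yields the same 64-character digest, so hashed values can still be used to join or group records without exposing the original address.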

Running the Pipeline

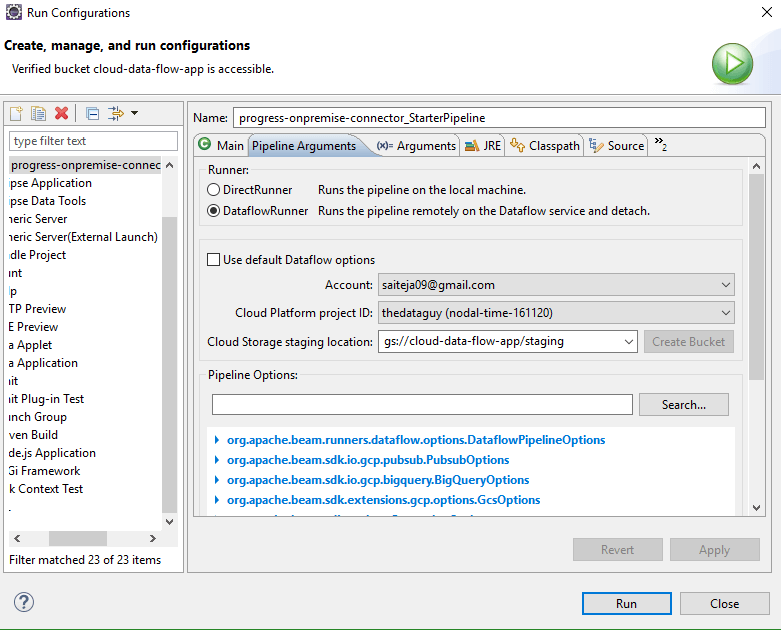

- Go to Run -> Run Configurations. Under Pipeline Arguments, you should see two options for running the pipeline:

- DirectRunner – runs the pipeline locally

- DataflowRunner – runs the pipeline on Google Cloud Dataflow

- To run locally, set the runner to DirectRunner and run the pipeline. Once it has finished, you should see your Salesforce data in Google BigQuery.

- To run the pipeline on Google Cloud Dataflow, set the runner to DataflowRunner and make sure that you choose your account, project ID, and a staging location, as shown below.

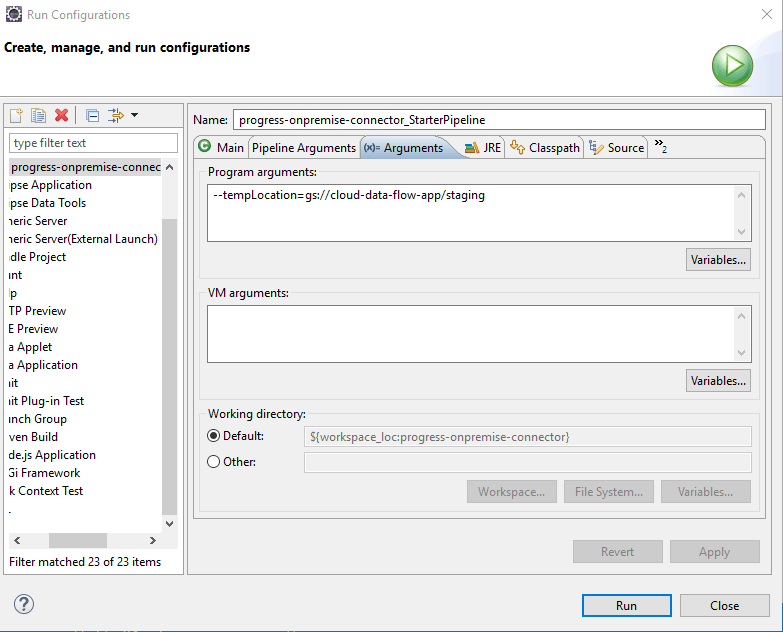

- Under Arguments -> Program Arguments, set tempLocation to the path where the BigQuery write should store its temporary files, as shown below.

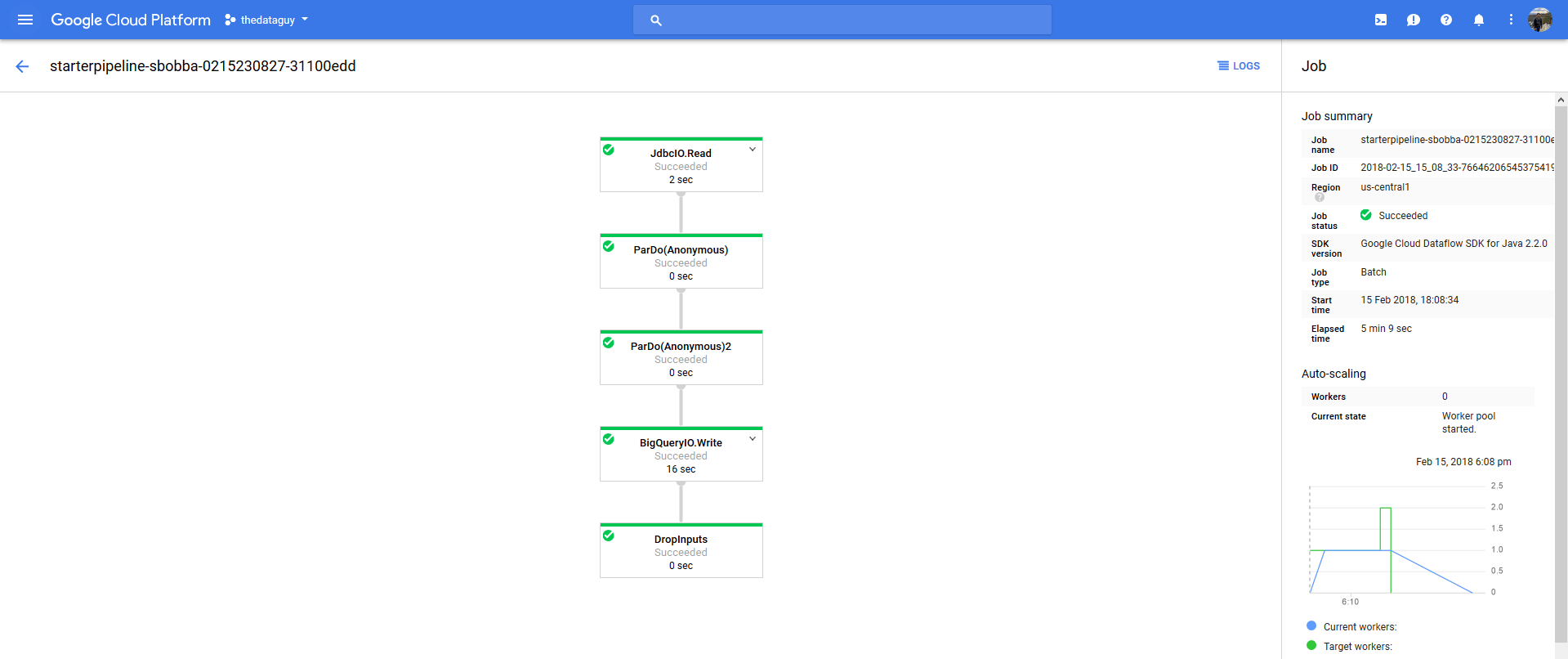

- Click Run, and you should see a new job starting in the Google Cloud Dataflow console. You can track its progress by clicking the job, which displays a flow chart showing the status of each stage.

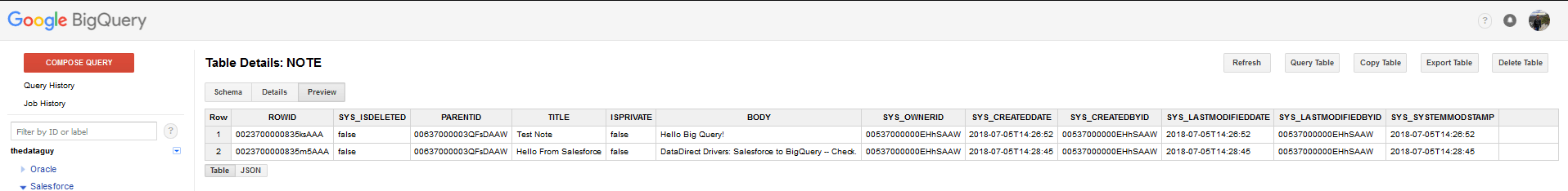

- Once the pipeline has run successfully, go to the Google BigQuery console and run a query on the table to see all your data.
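
The run configuration described above ultimately hands the pipeline a set of `--name=value` program arguments, which PipelineOptionsFactory.fromArgs(args) parses. If you want to launch the pipeline outside Eclipse, a sketch like the following shows the shape of those arguments. The helper class `PipelineArgs` and every value in it (project ID, bucket paths) are placeholders chosen for illustration. The option names `runner`, `project`, `stagingLocation`, and `tempLocation` are standard Beam and Dataflow pipeline options.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper that formats pipeline options as --name=value arguments.
class PipelineArgs {

    // Turns each map entry into a "--name=value" string, preserving map order.
    static String[] toArgs(Map<String, String> options) {
        List<String> args = new ArrayList<>();
        for (Map.Entry<String, String> option : options.entrySet()) {
            args.add("--" + option.getKey() + "=" + option.getValue());
        }
        return args.toArray(new String[0]);
    }

    public static void main(String[] unused) {
        Map<String, String> options = new LinkedHashMap<>();
        options.put("runner", "DataflowRunner");
        // Placeholder values: replace with your own project ID and bucket.
        options.put("project", "my-project-id");
        options.put("stagingLocation", "gs://my-bucket/staging");
        options.put("tempLocation", "gs://my-bucket/temp");
        for (String arg : toArgs(options)) {
            System.out.println(arg);
        }
    }
}
```

Switching `runner` to `DirectRunner` gives the local equivalent; the resulting array is what you would pass to the pipeline's main method.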

We hope this tutorial helped you get started with ETL of Salesforce data into Google BigQuery using Google Cloud Dataflow. You can use a similar process with any of the DataDirect JDBC drivers for Eloqua, Oracle Sales Cloud, Oracle Service Cloud, MongoDB, Cloudera, and others. Please contact us if you need help or have any questions.