Determining the relevant facts surrounding an important situation is never easy. Getting agreement as to their interpretation is even harder. Documenting the journey for others to learn from – well, there’s rarely enough time for that, right?

Yet determining facts and what they mean underpins so many critical aspects of modern, digital life – “the data was there, why wasn’t it acted on?”

It’s simple economics. Raw data is relatively inexpensive. Skilled interpretations, less so.

It is no longer difficult to acquire rich data we couldn’t even imagine a short while ago. Acquiring smart people to make sense of it all – that’s getting more difficult. And the “hire more smart people” approach by itself doesn’t scale well, unless they have the tools to work together effectively.

We want to get from data to knowledge, insight to action. How do we get better at that?

Today’s mainstream approach – using smart people – runs into serious problems as complex data sources multiply and required expertise grows. The people themselves are very smart and knowledgeable, but don’t have an easy way to formalize and share their knowledge.

Step inside any larger organization, and you’ll find multiple, critical functions, each with their own version of needing to interpret facts in the form of complex data, and what those facts mean.

That knowledge – those facts and interpretations – wants to be shared with other functions. Research wants to share with new product introduction teams, intelligence agencies want to share among themselves, risk-sensitive organizations want to share knowledge of what it means to be compliant at all times, and so on.

How do you get teams of very smart people to work together over a shared database of facts along with what the facts mean?

A quick example will help illustrate the challenge.

John Is the Parent of Sue

The above statement is a fact – a piece of data – easily enterable into your choice of application or database. But what exactly does it mean?

To begin with, what do we really know about “John”? What assertions can we make as to gender, age, and so on? And what do we also know about that “Sue” entity?

It starts to get fun when we start parsing “is parent of”. What is the exact relationship? Biological, financial, legal, religious, custodial? Something else? And by what authority?

And what do those definitional terms mean in our context? Are we a bank, a school, a social services agency, a health clinic, an airline, an intelligence agency – something else?

If “John is the parent of Sue” is the database fact, the “what it means” is the shared interpretations of the entities and relationships, what they mean to us in our contexts, what those terms mean, and so on.

You can see that there is substantial “embedded knowledge” required to properly interpret that statement – or any statement – in context.

Simply put – now we have a fact, and what we know about that fact. If we can encode it and share it, others will benefit from this known and agreed interpretation of “X is parent of Y.”
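To make the idea of “a fact, and what we know about that fact” concrete, here is a minimal Python sketch. It is only an illustration: the class names (`Fact`, `Interpretation`, `SharedFact`), the fields, and the sample contexts and authorities are all assumptions made up for this example, not part of any particular product or schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Fact:
    """The raw statement as it might sit in a database."""
    subject: str
    predicate: str
    obj: str


@dataclass(frozen=True)
class Interpretation:
    """What one team agrees the fact means in its own context (hypothetical fields)."""
    context: str    # who is interpreting, e.g. a school or a bank
    kind: str       # biological, legal, custodial, financial, ...
    authority: str  # by what authority the relationship is asserted


@dataclass
class SharedFact:
    """A fact stored together with every agreed interpretation of it."""
    fact: Fact
    interpretations: dict[str, Interpretation] = field(default_factory=dict)

    def add(self, interpretation: Interpretation) -> None:
        # One agreed interpretation per context; a later one replaces the earlier.
        self.interpretations[interpretation.context] = interpretation

    def meaning_for(self, context: str) -> Interpretation | None:
        # Returns None when a context has no agreed interpretation yet.
        return self.interpretations.get(context)


shared = SharedFact(Fact("John", "is parent of", "Sue"))
shared.add(Interpretation("social services", "custodial", "court order"))
shared.add(Interpretation("school", "legal", "enrollment form"))

print(shared.meaning_for("social services").kind)  # custodial
print(shared.meaning_for("airline"))                # None
```

The same stored fact answers differently depending on who is asking, and a context with no agreed interpretation is visibly empty rather than silently guessed.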

This can be essential organizational knowledge, especially if your team is making critical decisions that involve precise and nuanced definitions of parental status, as is often the case in social services.

Indeed, most teams will develop deeply specialized knowledge over time – that’s the idea. Dense jargon will appear to more precisely define entities and relationships, with the new language being used in very precise ways.

Many Facts, Many Meanings

In our organizations, we have many digital facts with many meanings. We use databases to ingest, store, manage and protect those digital facts, but not what the facts mean, as facts often mean very different things throughout a complex organization.

An example would be someone making an online purchase of a new phone. While that might sound simple, it quickly unfolds into a very long list of important business processes, each interpreting that seemingly simple fact quite differently:

- The fulfillment function will care about the phone getting delivered, getting the consumer using the phone and related issues.

- The product management function will be very interested in the demographics of who bought the phone, the exact feature set of the new phone, and any usage data for this category of consumer.

- The supply chain function may need to order more of that type of phone, or substitute a similar model if availability is an issue.

- The network planning function is keeping an eye on aggregate device demand for 5G.

- The credit function is watching usage vs. payment.

- The customer service function will want a history of all interactions with this customer, and so on.

- The transaction will need to be auditable, compliant, might be subject to subpoena, etc.

One digital event – a simple phone purchase – can have many, many interpretations over time. Also, in a modern context, these different functions might be performed by business partners.

While the facts might be connected, the interpretations usually aren’t. For example, the network planners should know that product management thinks the purchaser won’t be using much 5G data, but that interpretation usually doesn’t reach them.

Shared interpretations are shared knowledge. Familiar forms of communication – spoken, written, visual – are what we use to share knowledge today. But that doesn’t scale well.

Sharing facts – relatively easy.

Sharing interpretations and meanings – historically difficult.

Sharing facts and their meanings as one entity – potentially game changing.

Sharing Facts, But Not Meanings

Data sharing has been with us since the dawn of computing itself. Whether that takes the form of querying primary systems, receiving reports, searching data marts or whatever – digital facts by themselves are generated and shared using a wide variety of approaches that continue to evolve.

But the digital sharing of meanings and interpretations – semantics – is comparatively recent. Siri, for example, interprets things in a very constrained and general-purpose way. We don’t expect Siri to know what a zero coupon bond might be, or a protease inhibitor.

But semantic AI technology can quickly be taught the practical meaning of such complex concepts. It does this by interpreting human statements, and generating metadata much the way humans do.

Much as you would instruct a new hire about important terms and concepts – and what they mean – semantic AI software can do much the same thing when instructed by a subject domain expert.

This encoded knowledge is stored as metadata – information about information, or – in this case – what we know about the data, its conceptual definition and its relationships with other data concepts that we are using to interpret the data.

Ideally we would store the meaning of data – encoded as metadata – together with the data itself. That way, anyone who wanted access to the data would get its full context – everything that is known about it, how it is known, when it was known, and so on.
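As a rough sketch of what “meaning stored together with the data” could look like, the Python below wraps a record and its metadata in a single document, so that whoever retrieves the data also retrieves what is known about it, how it is known, and when. The function name `wrap_with_context`, the field names, and the sample values are assumptions chosen for illustration only.

```python
import json
from datetime import datetime, timezone


def wrap_with_context(data, meaning, source, known_at):
    """Bundle a record with what is known about it, how, and when."""
    return {
        "data": data,
        "metadata": {
            "meaning": meaning,                  # what we know about the data
            "source": source,                    # how it is known
            "known_at": known_at.isoformat(),    # when it was known
        },
    }


record = wrap_with_context(
    data={"subject": "John", "predicate": "is parent of", "object": "Sue"},
    meaning="legal guardianship, as defined by this (hypothetical) agency",
    source="court order on file (illustrative)",
    known_at=datetime(2021, 1, 15, tzinfo=timezone.utc),
)

# Data and metadata are stored and retrieved as one unit.
stored = json.dumps(record)
retrieved = json.loads(stored)

print(retrieved["data"]["predicate"])      # is parent of
print(retrieved["metadata"]["known_at"])   # 2021-01-15T00:00:00+00:00
```

Because the context sits in the same document as the fact, a consumer cannot get one without the other, which is the property the paragraph above is asking for.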

While that might sound like a simple idea, it is rarely put into practice.

A familiar example would be the data marts found in most larger organizations. Grab any report or data dictionary – what do those hundreds or thousands of different terms really mean? Which set of terms, concepts and definitions is right for my situation?

Facts and Meaning As One

If we take a lifecycle view of a given piece of data, it would start with ingestion from an external source, or perhaps creation via an application of some sort. A digital event happens, and we want to make a record of it – who, what, when, etc.

Very often, it involves a person codifying data from a source document (such as a contract, research report, bulletin, message or similar) in the form of complex data.

The initial evaluation is important to many: is there potentially something important in this data, or can this new piece of information be safely ignored or handled later?

Imagine receiving a health record, an email from an important client, a new research report, or perhaps a report from the financial markets.

To make that decision, the new facts must be connected to existing facts and their interpretations, something that we all naturally do.

That all-important base of known facts and interpretations needs to be built, maintained and improved. New interpretations of existing facts will be created, representing new knowledge. “Know-how” becomes quantified by the richness of the shared interpretations created. Intellectual capital becomes an improvable, investable asset.

When data is being consumed for any purpose, it can now be consumed in the context of everything that is known about the data. Search goes from frustrating to informed. Applications go from dumb to context-rich. Analytics go from crunching abstract numbers to better understanding grounded facts.

Smart People Working Together

You see this specific pattern everywhere – teams of very smart people using whatever tools are at hand to help interpret facts and what they might mean. This needs to happen both within functions (such as research teams) and also across functions (acting on the knowledge of others).

But it’s clearly not scaling well as we get deeper into the digital era. We have no shortage of digital facts. Useful interpretations are scarce, though.

It helps to consider what has evolved relatively quickly in so many situations. Imagine a typical team of smart people working on something important.

How much complex data did that team need to interpret and act on five years ago?

How much now?

How much five years from now?

10x? 100x? Perhaps more?

With the digital fact supply essentially unconstrained, we are now asking people to interpret a vastly larger number of digital facts. Some are important, many are not, so quality matters. New tools will be required, as spreadsheets and analytics can only do so much.

Even if these small, nimble teams succeed internally, that’s not enough. We want others to act on their insights, adding their unique knowledge and lens – and often in situations where decisions of consequence are being made.

If we ask ourselves, “how well are we doing in helping people to create reusable knowledge?” the answer wouldn’t be positive.

That’s not because the people aren’t good; it’s because the familiar data-only model isn’t scaling well. And most knowledge management efforts are divorced from the facts that created them, and end up being of limited use.

In the digital era, data is cheap, interpretations are expensive.

At some point, simply hiring more smart people by itself isn’t the answer. Indeed, history has shown that it often achieves the exact opposite effect. Without an agile “data and what we know about it” platform, fragmentation of meaning increases, leading to new problems.

You See It Everywhere

Anywhere decisions of consequence are being made, you can easily find this problem. New pieces of complex data have to be ingested and understood: is this important or not?

If you are in life sciences research, and ~1,000+ potentially relevant research papers are published daily, how do you stay ahead?

If you are in fraud, security, or intelligence, you live in fear of missing the signal in all the noise, yet being oversensitive will burn people out.

The same for financial services, logistics, and a wide variety of organizations with very different missions, but identical problems when viewed this way.

It’s not hard to make the case that interpreting potentially important information – and safely ignoring the rest – is one area where better approaches to sharing “facts and what they mean” can have a fantastic impact.

But there is another very important area where “facts and what they mean” can have an enormous impact, and that’s sharing important knowledge across organizational boundaries. If you’ve ever worked in a large, complex organization, you know this can be a very difficult problem.

For example, if your company is building something very critical and complex – say passenger aircraft or a new therapeutic – the shared knowledge around how one builds that aircraft or therapeutic becomes very critical in and of itself.

That knowledge influences every part of the organization: research, design and test, manufacturing, customer operations, lifecycle, compliance and more.

A knowledge-centric business or organization will eventually demand a shared platform to share facts and what is known about them. The same could be said for financial services, specialty consulting, research or any pursuit where deep domain knowledge is what enables the product or service.

Facts and What They Mean

Facts and what they mean turn out to be very important indeed in the digital era.

In future articles, we’ll be exploring the concept of a semantic database – a database that stores complex data alongside semantic interpretations of what the data means.

We’ll show how a semantic database creates data agility by connecting active data, active metadata and active meaning.

Data agility is the ability to make simple and powerful changes to any aspect of how information is interpreted. By removing friction, it changes what is possible.

And, perhaps more interesting, we’ll share how real-world organizations are using semantic database concepts and data agility to change the way that they work with complex information, and are producing transformational results in the process.

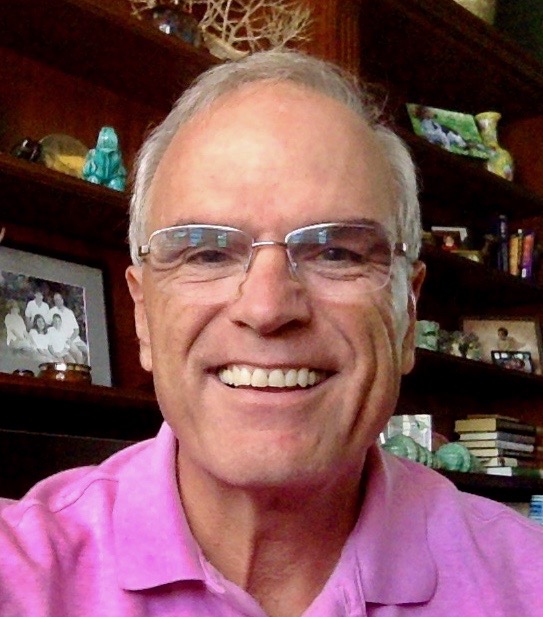

Chuck Hollis

Chuck joined the MarkLogic team in 2021, coming from Oracle as SVP Portfolio Management. Prior to Oracle, he was at VMware working on virtual storage. Chuck came to VMware after almost 20 years at EMC, working in a variety of field, product, and alliance leadership roles.

Chuck lives in Vero Beach, Florida with his wife and three dogs. He enjoys discussing the big ideas that are shaping the IT industry.